Japan’s “bits-to-watts” data center trial is a scaling lesson for Singapore startups. Learn how to shift AI workloads, cut peaks, and expand across APAC.

AI Data Center Load Shifting: Lessons for SG Startups

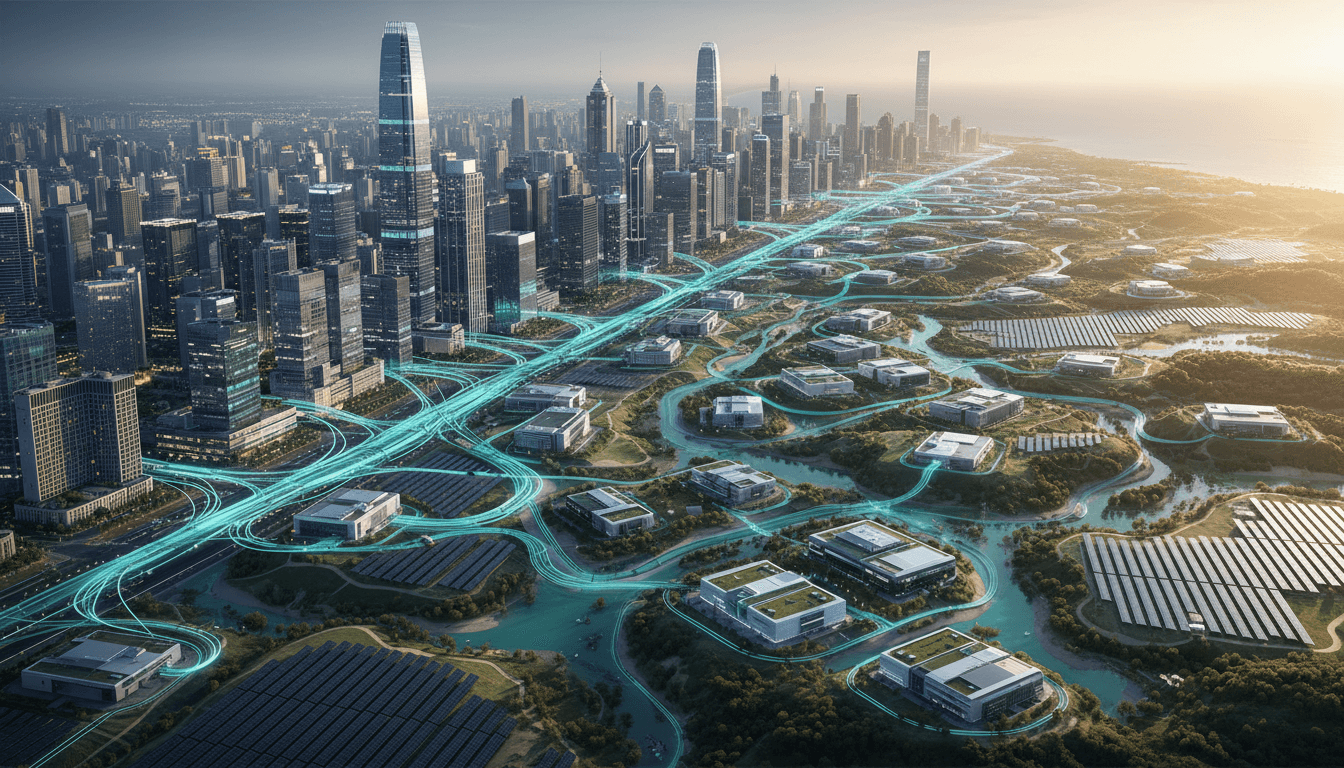

Japan’s next data-center play isn’t another mega-building outside Tokyo. It’s a networking experiment: connect data centers across regions with fiber so compute can “move” to wherever electricity is available.

That idea—often described as matching “bits to watts”—matters far beyond Japan’s power grid. If you’re building an AI product in Singapore and thinking about scaling across APAC, you’re facing the same core problem in a different form: demand is spiky, resources are unevenly distributed, and building “more capacity” is slow and expensive.

This post sits within our “AI dalam Logistik dan Rantaian Bekalan” (AI in Logistics and Supply Chain) series, where we usually talk about routes, warehouses, and demand forecasting. But the infrastructure behind AI is now part of the supply chain story. If your models optimize trucking routes while your compute costs explode, you haven’t actually optimized the system.

What Japan is testing (and why it’s a big deal)

Japan will start a trial in spring 2026 to connect data centers in different regions using fiber-optic cables, then shift processing workloads from constrained areas (notably around Tokyo) to regions with excess power capacity. The Ministry of Internal Affairs and Communications is leading the effort as part of its Watt-Bit Collaboration initiative, an attempt to integrate power and communications infrastructure.

The core bet is straightforward: new power infrastructure takes years and costs a fortune. But shifting compute—especially batch, non-urgent, or geographically flexible workloads—can reduce peak electricity pressure in dense hubs without waiting for new transmission lines.

Two details from the announcement are especially relevant:

- Regional imbalance is real: Kyushu can have abundant solar generation with relatively stable local demand, while the Tokyo area has a concentration of data centers and a tighter power situation.

- The target timeline is long-term: the system is aimed at real-world use by the late 2030s, because low-latency networking, coordination across operators, and operational standards are hard to get right.

Here’s the stance I’ll take: they’re right to start now. AI workloads are growing faster than most grids can comfortably absorb, and “just build more” is rarely the fastest solution.

“Bits to watts” is a supply chain concept in disguise

In logistics, everyone understands that you don’t fix congestion by building a new highway every time there’s traffic. You:

- reroute,

- smooth demand,

- use alternative nodes,

- and improve visibility so decisions happen early.

Japan is applying that same thinking to AI infrastructure.

The real mechanism: visibility + orchestration

Load shifting only works when two conditions are true:

- You can see supply and demand in near real-time (power availability by region, compute demand by service).

- You can orchestrate workloads fast enough (and safely enough) across different facilities.

That’s why the trial focuses on two questions: whether local power supply and data-center processing demand can be assessed instantaneously so that work can be distributed optimally, and whether low-latency communications across different equipment types are even feasible.

In supply chain terms, it’s control-tower logic:

“Optimization isn’t a one-time plan; it’s continuous decisions made with fresh constraints.”
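The control-tower loop can be sketched in a few lines of Python. This is a minimal greedy sketch, not the trial’s actual method: it assumes you already have a per-region snapshot of available power, current data-center demand, and how much of that demand is shiftable (all in megawatts), and it ignores network cost and latency. `RegionSnapshot` and `plan_shifts` are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass
class RegionSnapshot:
    name: str
    power_available_mw: float  # power the grid can deliver to this region's data centers now
    demand_mw: float           # current data-center draw
    flexible_mw: float         # part of the draw that comes from shiftable workloads


def plan_shifts(regions: list[RegionSnapshot]) -> list[tuple[str, str, float]]:
    """Greedy sketch: move flexible load from over-limit regions to regions with spare power.

    Returns a list of (from_region, to_region, megawatts) moves.
    """
    deficits: list[list] = []   # [region name, MW we need (and are able) to move out]
    surpluses: list[list] = []  # [region name, MW of spare headroom]
    for r in regions:
        headroom = r.power_available_mw - r.demand_mw
        if headroom < 0:
            # Only the flexible share of the overload can be shifted elsewhere.
            deficits.append([r.name, min(-headroom, r.flexible_mw)])
        elif headroom > 0:
            surpluses.append([r.name, headroom])

    # Handle the largest shortfalls first, filling them from the largest surpluses.
    deficits.sort(key=lambda d: d[1], reverse=True)
    surpluses.sort(key=lambda s: s[1], reverse=True)

    moves = []
    for deficit in deficits:
        for surplus in surpluses:
            amount = min(deficit[1], surplus[1])
            if amount <= 0:
                continue
            moves.append((deficit[0], surplus[0], amount))
            deficit[1] -= amount
            surplus[1] -= amount
    return moves
```

With hypothetical numbers echoing the Tokyo and Kyushu example, `plan_shifts([RegionSnapshot("tokyo", 100.0, 120.0, 30.0), RegionSnapshot("kyushu", 80.0, 50.0, 5.0)])` returns `[("tokyo", "kyushu", 20.0)]`: Tokyo is 20 MW over its limit and has 30 MW of flexible work, while Kyushu has 30 MW to spare. The hard part in practice is the visibility, that is, getting those snapshots fresh enough for the plan to still be valid.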

Why AI makes this urgent

AI workloads, especially training and inference at scale, create bursty, high-density power demand. Even if your product is “logistics AI” (route optimization, warehouse automation, demand forecasting), training cycles and peak inference traffic cause compute spikes, and customer SLAs oblige you to serve those peaks. Those compute spikes translate directly into energy spikes.

So the infrastructure layer becomes part of your operational risk.

What Singapore startups should learn when scaling across APAC

Singapore startups expanding into Japan, Indonesia, Malaysia, or Australia often focus on go-to-market (GTM) first: partners, pricing, local language, compliance. All important. But Japan’s move highlights a quieter reality:

Scaling across regions is an infrastructure planning problem as much as a marketing problem.

1) Don’t over-index on a single “hub” region

Japan’s Tokyo cluster is a warning sign. Centralizing everything feels efficient—until it isn’t.

For startups, the equivalent is:

- running all inference in one cloud region,

- putting all data pipelines through one data warehouse,

- relying on one ad channel or one distribution partner.

When demand grows, you hit hard limits: latency, cost, regulatory friction, or capacity constraints.

A better approach is to design for a multi-node operating model early:

- primary region for real-time inference

- secondary region(s) for batch jobs and non-urgent training

- clear failover patterns for outages and price spikes
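One way to make those failover patterns explicit is a small routing function. This is a sketch under assumptions: two regions with placeholder names, a health flag and a GPU-hour price per region that you would feed from your own monitoring, and a batch price ceiling you choose. `choose_region` and `RegionStatus` are hypothetical, not a cloud provider API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical region identifiers; substitute your provider's region names.
PRIMARY = "primary-region"
SECONDARY = "secondary-region"


@dataclass
class RegionStatus:
    healthy: bool
    gpu_hour_price: float  # current price you pay per GPU hour in this region


def choose_region(workload: str, status: dict[str, RegionStatus],
                  batch_price_ceiling: float) -> Optional[str]:
    """Route a workload under a primary/secondary operating model.

    Returns a region name, or None when batch work should wait.
    """
    primary, secondary = status[PRIMARY], status[SECONDARY]
    if workload == "realtime":
        # Real-time inference stays in the primary region; fail over only on an outage.
        if primary.healthy:
            return PRIMARY
        if secondary.healthy:
            return SECONDARY
        raise RuntimeError("no healthy region for real-time traffic")

    # Batch jobs and non-urgent training prefer the secondary region, then the
    # primary, but only while the price stays under the ceiling (price-spike guard).
    for name, region in ((SECONDARY, secondary), (PRIMARY, primary)):
        if region.healthy and region.gpu_hour_price <= batch_price_ceiling:
            return name
    return None  # defer: batch work can wait out an outage or a price spike
```

Returning `None` for batch work, rather than forcing it onto expensive capacity, reflects the split above: real-time inference must always land somewhere, while training and batch jobs can wait.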

2) Treat energy and compute like constrained inventory

In AI-driven logistics and supply chain work, you already model constraints: fleet capacity, warehouse slots, driver hours.

Apply the same thinking to compute:

- Compute budget (GPU hours) is inventory.

- Energy availability is a supply constraint.

- Latency tolerance is the service level.

If a workload can tolerate higher latency (say, nightly demand forecasting or end-of-day route re-optimization), that workload is a candidate for “regional shifting.”

Even if you’re not literally moving workloads based on power prices yet, simply classifying workloads by urgency is a high-ROI habit.

3) Build a “latency map” for your product

Japan’s project explicitly tests low-latency feasibility across different equipment and locations. For a startup, you don’t need experimental fiber networks—but you do need to know what latency does to your product.

Create a simple latency map:

- Which actions must be <100ms? (e.g., warehouse robotics control loops)

- Which can be seconds? (e.g., driver assignment updates)

- Which can be minutes or hours? (e.g., demand forecasting model refresh)

This becomes the basis for regional architecture, data residency planning, and cost control.
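A latency map becomes more useful once it is machine-readable and checked against measured round-trip times. The sketch below assumes you have a latency budget per action (the values simply mirror the three examples above) and round-trip times from your users or sites to each candidate location; the location names, RTT figures, and processing time are made up for illustration, and `eligible_locations` is a hypothetical helper.

```python
# Hypothetical latency budgets (milliseconds) taken from a product's latency map.
LATENCY_BUDGET_MS = {
    "robot_control_loop": 100,             # must be under 100 ms
    "driver_assignment_update": 5_000,     # seconds are acceptable
    "demand_forecast_refresh": 3_600_000,  # an hour is fine
}


def eligible_locations(action: str, rtt_ms: dict[str, float],
                       processing_ms: float) -> list[str]:
    """Locations where network round trip plus processing time fits the action's budget."""
    budget = LATENCY_BUDGET_MS[action]
    return sorted(loc for loc, rtt in rtt_ms.items() if rtt + processing_ms <= budget)
```

With illustrative round trips of 2 ms to an on-site edge box, 40 ms to Singapore, and 75 ms to Tokyo, plus 30 ms of processing, `eligible_locations("robot_control_loop", {"on-site-edge": 2.0, "singapore": 40.0, "tokyo": 75.0}, 30.0)` returns `["on-site-edge", "singapore"]`, while the driver-assignment action can run in all three.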

How this connects to AI in logistics and supply chain (practical examples)

If you’re working on AI in logistics and supply chain, you’ve probably got at least one of these on your roadmap:

Route optimization: separate “real-time” from “planning” compute

- Real-time re-routing during disruptions needs low latency and high availability.

- Weekly network redesign or scenario simulations can run in cheaper regions or off-peak hours.

Actionable play: schedule heavy simulations when your cloud offers lower spot pricing or when your chosen region has lower demand, then keep real-time services lightweight.
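Picking the off-peak slot for a heavy simulation is a sliding-window minimum over an hourly price series. A minimal sketch, assuming you supply an hourly price estimate for the coming day (for example, built from your own history of spot prices; actual spot prices can move, so the estimate is an input, not a guarantee). `cheapest_window` is a hypothetical helper.

```python
def cheapest_window(hourly_prices: list[float], hours_needed: int) -> tuple[int, float]:
    """Return (start_hour, total_cost) of the cheapest contiguous run of
    `hours_needed` hours, using a running sliding-window sum."""
    if not 0 < hours_needed <= len(hourly_prices):
        raise ValueError("hours_needed must be between 1 and len(hourly_prices)")
    window = sum(hourly_prices[:hours_needed])
    best_start, best_cost = 0, window
    for start in range(1, len(hourly_prices) - hours_needed + 1):
        # Slide right by one hour: add the newly covered hour, drop the oldest one.
        window += hourly_prices[start + hours_needed - 1] - hourly_prices[start - 1]
        if window < best_cost:
            best_start, best_cost = start, window
    return best_start, best_cost
```

For example, with illustrative prices `[5.0, 4.0, 1.0, 1.0, 2.0, 6.0]` and a three-hour job, `cheapest_window(prices, 3)` returns `(2, 4.0)`: start at hour 2 and pay 4.0 in total. The running sum keeps the scan linear in the number of hours, which matters little for 24 values but keeps the logic obvious.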

Warehouse automation: keep control local, push learning global

- Control systems (picking, sorting) need local reliability.

- Model training can be centralized or distributed, depending on data governance.

Actionable play: deploy edge inference for vision models on-site, but shift model retraining to a region optimized for cost/power—assuming your data policy allows it.

Demand forecasting: design for batch shifting

Forecasting is often a batch job with flexible timing.

Actionable play: run batch forecasting in a secondary region, store outputs in a replicated datastore, and serve forecasts locally.

This isn’t just about saving money. It’s about resilience: fewer surprises when your primary region gets congested.
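The “serve forecasts locally” half of the demand-forecasting play can be sketched as a local replica that the batch region writes into and the serving path reads from. This is a minimal in-memory stand-in, assuming your replicated datastore delivers each forecast row together with the time it was produced; `LocalForecastStore`, the staleness threshold, and the SKU keys are hypothetical. What it illustrates is that serving never calls the batch region, so a congested or unavailable secondary region makes forecasts stale rather than unavailable.

```python
import time
from typing import Optional


class LocalForecastStore:
    """In-memory stand-in for the local copy of a replicated forecast table."""

    def __init__(self, max_age_seconds: float):
        self.max_age_seconds = max_age_seconds
        self._rows: dict[str, tuple[float, float]] = {}  # sku -> (forecast, produced_at)

    def apply_replicated_write(self, sku: str, forecast: float, produced_at: float) -> None:
        """Apply a row produced by the batch region; older rows never overwrite newer ones."""
        current = self._rows.get(sku)
        if current is None or produced_at > current[1]:
            self._rows[sku] = (forecast, produced_at)

    def read(self, sku: str, now: Optional[float] = None) -> Optional[tuple[float, bool]]:
        """Return (forecast, is_stale) from the local copy, or None if no row has arrived."""
        row = self._rows.get(sku)
        if row is None:
            return None
        now = time.time() if now is None else now
        forecast, produced_at = row
        return forecast, (now - produced_at) > self.max_age_seconds
```

Flagging staleness instead of failing lets the operational side keep planning with yesterday’s forecast while the batch region catches up.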

A simple “bits-to-watts” playbook for startups (you can start this quarter)

You don’t need to wait for late-2030s infrastructure. You can adopt the principle now.

Step 1: Tag workloads by SLA and latency tolerance

Use three buckets:

- Immediate (real-time inference, control)

- Near-real-time (seconds to minutes)

- Batch (hours)
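Step 1 can be encoded directly so that every service declares its bucket in the same way. A minimal sketch, assuming each workload has a known maximum tolerable delay; the 1-second and 1-hour cut-offs and the workload names are illustrative assumptions, not a standard.

```python
from enum import Enum


class Bucket(Enum):
    IMMEDIATE = "immediate"            # real-time inference, control
    NEAR_REAL_TIME = "near-real-time"  # seconds to minutes
    BATCH = "batch"                    # hours


def tag_workload(max_delay_seconds: float) -> Bucket:
    """Map a workload's tolerable delay to one of the three buckets.

    The cut-offs below are illustrative; derive your own from your SLAs.
    """
    if max_delay_seconds < 1:
        return Bucket.IMMEDIATE
    if max_delay_seconds < 3_600:
        return Bucket.NEAR_REAL_TIME
    return Bucket.BATCH


# Hypothetical workload inventory: name -> tolerable delay in seconds.
workloads = {
    "vision_inference": 0.05,
    "driver_assignment": 10,
    "nightly_demand_forecast": 6 * 3_600,
}
tags = {name: tag_workload(delay) for name, delay in workloads.items()}
```

Everything tagged `Bucket.BATCH` is the first candidate for a secondary region or an off-peak window.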

Step 2: Choose a multi-region pattern on purpose

Common patterns that work for early-stage teams:

- Active–passive: one primary region, one standby

- Active–active for read-heavy services: replicate data and serve locally

- Split by workload: inference in-region, training/batch elsewhere

Step 3: Instrument cost and carbon as first-class metrics

If you’re selling to enterprises, this helps with procurement. If you’re selling to SMEs, it still helps margins.

Track:

- cost per 1,000 predictions

- GPU hours per model refresh

- peak vs average utilization

If you can’t measure it, you can’t shift it.
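The three metrics are simple ratios; writing them down as functions keeps every team computing them the same way. A minimal sketch, assuming you can export total serving cost, prediction counts, GPU counts and run times, and periodic utilization samples from your own billing and monitoring; the function names are hypothetical.

```python
def cost_per_1k_predictions(total_cost: float, predictions: int) -> float:
    """Serving cost normalized to 1,000 predictions."""
    return total_cost * 1_000 / predictions


def gpu_hours_per_refresh(gpus: int, wall_clock_hours: float) -> float:
    """GPU hours consumed by one model refresh (GPUs used x hours they ran)."""
    return gpus * wall_clock_hours


def peak_to_average(utilization: list[float]) -> float:
    """Ratio of peak to mean utilization; values well above 1 signal spiky demand."""
    return max(utilization) / (sum(utilization) / len(utilization))
```

With illustrative inputs, a spend of 50 over 200,000 predictions gives 0.25 per 1,000 predictions; 8 GPUs running a 2.5-hour refresh use 20 GPU hours; and utilization samples of 20, 40, 90, and 50 percent give a peak-to-average ratio of 1.8, which is the kind of peak that load shifting tries to flatten.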

Step 4: Plan for the “regional reality” of APAC

APAC isn’t one market. Power reliability, cloud region availability, cross-border data rules, and latency vary widely.

Your expansion plan should include:

- where data must live

- where inference must run

- where batch compute is cheapest and safest

This is infrastructure planning, but it directly affects your ability to win deals.
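The three planning questions combine into a constrained choice: first filter by where the data is allowed to go, then pick on cost. A sketch assuming you have already translated your residency obligations into a set of allowed countries per dataset (that mapping comes from your own legal and contract review, not from code); the region names, countries, prices, and `cheapest_compliant_region` itself are placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Region:
    name: str
    country: str           # ISO country code where the region's data centers sit
    gpu_hour_price: float  # your negotiated or list price for batch GPU time


def cheapest_compliant_region(regions: list[Region],
                              allowed_countries: set[str]) -> Optional[str]:
    """Cheapest region for batch compute among countries where this data may be processed."""
    candidates = [r for r in regions if r.country in allowed_countries]
    if not candidates:
        return None  # no compliant option: keep the job where the data lives, or redesign
    return min(candidates, key=lambda r: r.gpu_hour_price).name
```

Because residency is a filter and price is only the tie-breaker, the cheapest region overall can lose to a pricier one that your data is actually allowed to reach.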

People also ask: does load shifting hurt latency and reliability?

It can, if you shift the wrong workloads. Load shifting works when the workloads you move are tolerant of delay or can be parallelized. It fails when you move tightly coupled, low-latency services without redesigning the system.

A rule I’ve found useful:

“Shift the compute that produces tomorrow’s advantage, not the compute that runs today’s operations.”

In logistics AI terms, keep operational control close to the edge; move analytics and training to where capacity is abundant.
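The difference between delay-tolerant and tightly coupled work shows up in back-of-the-envelope arithmetic: if a service moves to another region but keeps calling a dependency that stays behind, each sequential call pays the cross-region round trip. A minimal sketch, assuming the calls are sequential (parallel calls would add roughly one round trip, not one per call); the 70 ms round trip below is a placeholder rather than a measured figure, and the function names are hypothetical.

```python
def added_latency_ms(sequential_calls: int, cross_region_rtt_ms: float) -> float:
    """Extra latency when a service moves away from a dependency it calls one call at a time."""
    return sequential_calls * cross_region_rtt_ms


def safe_to_shift(current_latency_ms: float, latency_budget_ms: float,
                  sequential_calls: int, cross_region_rtt_ms: float) -> bool:
    """True if a request still fits its latency budget after the move."""
    extra = added_latency_ms(sequential_calls, cross_region_rtt_ms)
    return current_latency_ms + extra <= latency_budget_ms
```

A batch route re-optimization that makes one call to its data store and has an hour of slack shifts easily: `safe_to_shift(2_000, 3_600_000, 1, 70)` is `True`. A chatty dispatch service that calls a co-located database five times in sequence under a 300 ms budget does not: `safe_to_shift(150, 300, 5, 70)` is `False`, because the move adds 350 ms.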

What to watch next (Japan, Singapore, and the regional trend)

Japan’s trial is a signal: governments and operators are preparing for an AI-heavy future where energy and connectivity are co-optimized.

Singapore startups should pay attention because:

- AI adoption is accelerating across logistics, retail, manufacturing, and finance.

- Data center and grid constraints are increasingly part of the conversation across the region.

- Enterprise buyers are asking tougher questions about resilience, cost predictability, and sustainability.

If you can explain your architecture and operations in a “supply chain” way—nodes, constraints, rerouting, redundancy—you’ll sound like a team that’s ready to scale.

The reality? It’s simpler than it sounds: classify workloads, design multi-region intentionally, and use metrics to decide what moves.

Where could your product tolerate a slower response in exchange for lower cost and higher resilience—and where absolutely not?