Prefab AI data centre infrastructure helps Singapore firms scale AI faster with predictable power, cooling, and deployment timelines.

Prefab AI Data Centres: Faster AI Rollouts in Singapore

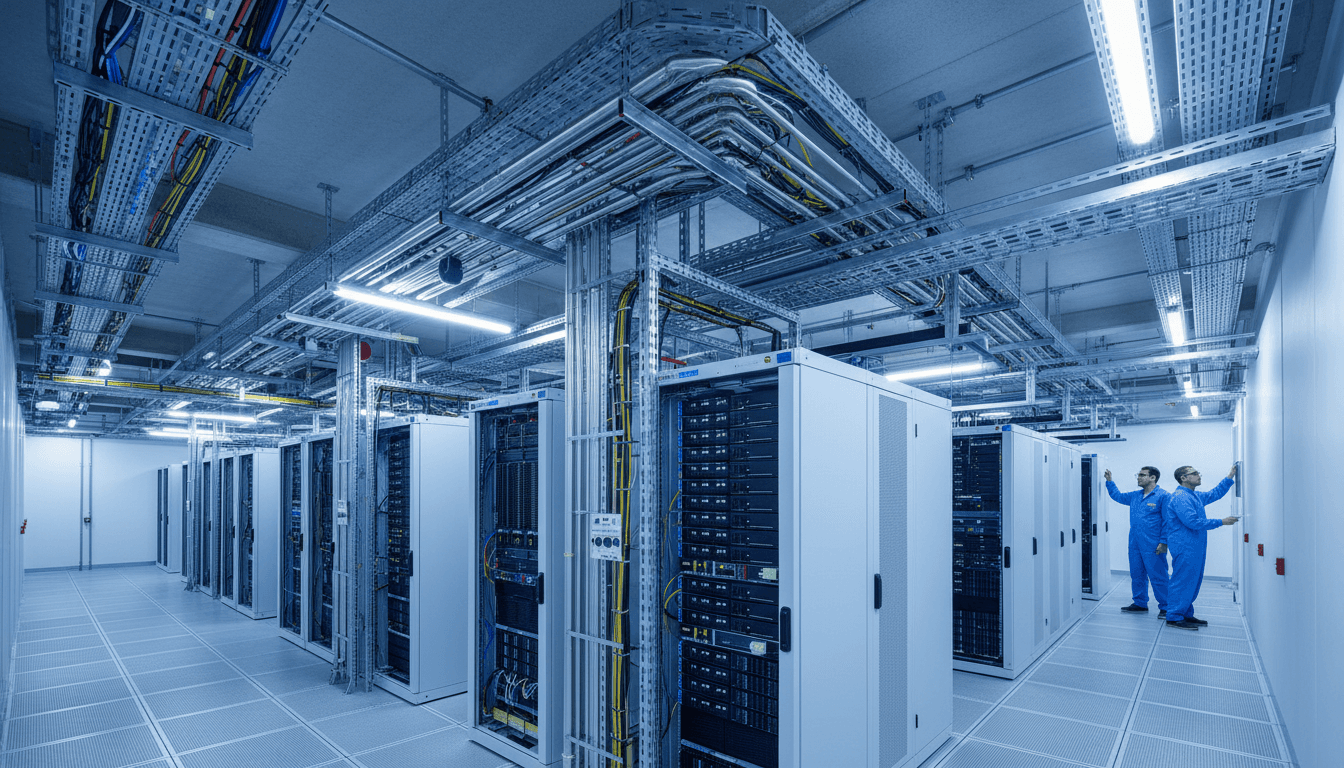

AI pilots don’t usually fail because the model is “not smart enough.” They fail because the underlying infrastructure can’t keep up—power draw spikes, cooling becomes the bottleneck, and deployment schedules stretch from weeks into quarters. That’s why Vertiv’s late-January launch of SmartRun, a prefabricated overhead infrastructure system for AI data centres, matters far beyond the data centre industry.

For this AI Business Tools Singapore series, the point is simple: faster, more predictable AI infrastructure translates into faster, more reliable AI adoption in business—from customer service automation to marketing personalisation to real-time analytics in operations. When compute is scarce or delayed, every “AI business tool” hits a ceiling.

Prefabricated AI data centre infrastructure: what it actually changes

Prefabricated overhead infrastructure changes the pace and risk profile of AI capacity expansion. Instead of building power distribution, cooling, containment, and network cabling as separate on-site projects, SmartRun packages them into a single modular design that’s installed as an integrated unit.

Here’s the practical shift: less on-site coordination, fewer installation sequences, fewer handoffs. Traditional “stick-build” data hall work tends to slow down at exactly the wrong time—when you need to scale quickly for AI training, inference, and HPC workloads.

Vertiv positions SmartRun as a response to three pressures hitting operators across Asia:

- Construction timelines are now part of the competitive equation.

- Skilled labour shortages make parallel build-outs harder.

- Cooling constraints rise sharply as rack densities increase.

And one detail from the announcement is worth highlighting because it’s so operationally concrete: Vertiv claims SmartRun can support deployments “of more than 1MW per day with a single crew” (site-dependent, but directionally meaningful). Translate that into business language: your capacity plan becomes something you can schedule, not something you gamble on.
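To make the scheduling point concrete, here is a minimal Python sketch of the arithmetic. It assumes a flat install rate of 1 MW per day per crew, which treats Vertiv's "more than 1MW per day" claim as a floor, and it further assumes that extra crews scale linearly. Neither assumption comes from the announcement, and real rates are site-dependent. The function name `days_to_deploy` and the 12 MW target are illustrative only.

```python
import math


def days_to_deploy(target_mw: float, mw_per_day: float = 1.0, crews: int = 1) -> int:
    """Return the whole working days needed to install target_mw of capacity.

    Assumptions (not from the announcement): a constant daily rate and
    linear scaling across crews.
    """
    if target_mw < 0 or mw_per_day <= 0 or crews < 1:
        raise ValueError("target_mw must be >= 0, mw_per_day > 0, crews >= 1")
    return math.ceil(target_mw / (mw_per_day * crews))


if __name__ == "__main__":
    # Hypothetical 12 MW expansion at the assumed 1 MW/day rate.
    print(days_to_deploy(12))            # one crew
    print(days_to_deploy(12, crews=2))   # two crews, assuming linear scaling
```

The output is a rough planning figure, not a commitment: the value of a predictable install rate is that this kind of estimate becomes possible at all.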

Why Singapore businesses should care (even if you don’t run a data centre)

Singapore companies feel data centre constraints indirectly—through cost, lead time, and availability of AI-ready capacity. Most businesses aren’t installing liquid cooling loops themselves. But they are deciding whether to:

- keep workloads on shared cloud GPU pools,

- reserve dedicated capacity via colocation or managed services,

- or build private AI clusters for regulated data.

When AI-ready capacity takes too long to come online, you see knock-on effects:

- AI product roadmaps slip. Customer-facing features (recommendations, smart search, fraud detection) depend on predictable inference capacity.

- Costs rise. Scarcity pricing appears in GPU instances, premium hosting, and expedited build-outs.

- Security and compliance decisions get distorted. Teams may keep sensitive workloads in suboptimal environments because compliant capacity isn’t available yet, or because migrating later would cause downtime.

My take: in 2026, “time-to-compute” is becoming as important as time-to-market. Singapore’s AI leaders will be the ones who treat infrastructure as part of the business plan, not a procurement afterthought.

The real bottleneck for AI data centres: cooling, not just power

Liquid cooling is moving from specialist setups to mainstream AI data centre design. As AI servers push higher thermal loads, air cooling alone becomes inefficient or impractical—especially at sustained high utilisation.

The challenge isn’t only adding cooling capacity. It’s the messy reality of retrofits:

- additional piping and secondary fluid networks,

- coordination across multiple vendors,

- disruptions in live environments,

- and a larger operational surface area to monitor.

SmartRun’s notable design choice is that it integrates prefabricated stainless-steel liquid cooling piping into an overhead system alongside power distribution, hot-aisle containment, and network infrastructure. That bundling matters because it reduces “interfaces”—the places where projects fail due to mismatched specs, installation sequencing, or vendor blame games.

A simple rule for buyers: densities drive architecture

As rack density climbs, the facility architecture changes. If you’re a Singapore business scoping AI infrastructure (even via a partner), don’t accept vague statements like “we’re AI-ready.” Ask for specifics:

- What rack densities are supported today (kW per rack)?

- Is liquid cooling available now, or “roadmapped”?

- How is coolant distribution handled (overhead vs underfloor)?

- What’s the standard deployment unit (row, pod, module)?

If the answers are fuzzy, timelines and costs will be fuzzy too.
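One way to see why the kW-per-rack answer matters is to work out how many racks the same IT load needs at different densities. The sketch below is a simplification that ignores redundancy, networking racks, and floor layout. The 600 kW load and the density values are hypothetical figures chosen for illustration, not numbers from Vertiv or any provider.

```python
import math


def racks_needed(it_load_kw: float, kw_per_rack: float) -> int:
    """Return the number of racks needed to host it_load_kw at a given density."""
    if it_load_kw < 0 or kw_per_rack <= 0:
        raise ValueError("it_load_kw must be >= 0 and kw_per_rack > 0")
    return math.ceil(it_load_kw / kw_per_rack)


if __name__ == "__main__":
    it_load_kw = 600  # hypothetical AI cluster load
    for density in (10, 40, 80):  # hypothetical kW-per-rack options
        print(f"{density} kW/rack -> {racks_needed(it_load_kw, density)} racks")
```

The footprint shrinks as density rises, but each step up in density is also where the cooling questions above stop being optional.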

Speed is now a feature: modular build-outs and predictable delivery

The strongest argument for prefabrication isn’t elegance—it’s repeatability. AI data centres increasingly resemble manufacturing lines: standard modules, consistent installs, predictable commissioning.

That’s why prefabricated infrastructure is showing up more often in Asia’s AI build-outs. It deals directly with what slows projects down:

- too many site-specific design variations,

- dependency on scarce specialist labour,

- and sequential installation steps.

What this means for AI adoption in marketing, ops, and customer engagement

If you’re leading AI adoption inside a Singapore company, the infrastructure conversation can feel far removed from your day-to-day—until it isn’t. Here’s how modular capacity shows up in practical outcomes:

- Marketing personalisation: If inference capacity is constrained, teams batch jobs overnight and ship “personalised” messages that are already stale. More predictable capacity enables near-real-time segmentation and offers.

- Customer engagement (contact centre AI): Voice and chat assistants need low-latency inference and high availability. Capacity delays force compromises: smaller models, shorter context windows, or aggressive rate limits.

- Operations analytics: Demand forecasting and anomaly detection often require frequent retraining. If training capacity is hard to secure, retraining cadence slips and accuracy drops.

A useful internal metric I’ve found: “days from approved use-case to stable production inference.” Infrastructure delays are usually the hidden driver behind poor numbers.
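If you want to track that metric, a spreadsheet is enough, but a short Python sketch shows the calculation. Everything here is an assumption for illustration: the use-case names, the dates, and the choice of the median as the summary statistic are hypothetical.

```python
from datetime import date
from statistics import median


def days_to_stable_inference(approved: date, stable: date) -> int:
    """Calendar days from use-case approval to stable production inference."""
    if stable < approved:
        raise ValueError("stable date cannot be earlier than approval date")
    return (stable - approved).days


# Hypothetical records: (approval date, date inference was declared stable).
USE_CASES = {
    "contact-centre assistant": (date(2026, 1, 5), date(2026, 3, 16)),
    "marketing segmentation": (date(2026, 1, 12), date(2026, 2, 23)),
    "demand forecasting": (date(2026, 2, 2), date(2026, 5, 4)),
}


def summarise(records):
    """Return per-use-case durations and their median, in days."""
    durations = {
        name: days_to_stable_inference(approved, stable)
        for name, (approved, stable) in records.items()
    }
    return durations, median(durations.values())


if __name__ == "__main__":
    durations, typical = summarise(USE_CASES)
    for name, days in durations.items():
        print(f"{name}: {days} days")
    print(f"median: {typical} days")
```

Splitting each duration into "waiting for capacity" and "everything else" is a natural next step, since that split is what reveals whether infrastructure is the driver.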

Vertiv’s broader signal: integrated systems + services is the new default

SmartRun isn’t just a product announcement; it signals how vendors think buyers want to buy. Instead of separate power, cooling, and network components, the market is shifting toward integrated systems supported by long-term services.

That makes sense because AI facilities are harder to operate, especially when liquid cooling becomes normal. Cooling performance isn’t a one-time design win; it’s an ongoing tuning exercise—monitoring flow, temperatures, efficiency, and failure modes.

Vertiv’s positioning includes support from its liquid cooling and services teams (installation, maintenance, optimisation). Whether you buy from Vertiv or not, the direction is consistent: operators want fewer vendors and clearer accountability.

In AI data centres, “integration” is risk management: it reduces schedule risk, commissioning risk, and operational risk.

Practical checklist: how to evaluate “AI-ready” capacity in Singapore

If you’re sourcing AI infrastructure (directly or through a provider), evaluate it like a production system—not a one-off IT project. Use this checklist to structure your RFPs and partner conversations.

1) Deployment speed and predictability

- What’s the typical lead time from PO to live capacity?

- Is the build modular (row/pod-based) with repeatable designs?

- What are the dependencies on specialised labour on-site?

2) Cooling capability (present, not promised)

- Is the environment air-cooled only, hybrid, or liquid-cooled today?

- Is liquid cooling distribution already installed in the module?

- How is hot-aisle containment implemented?

3) Power distribution and scaling

- Can the design support higher rack densities without redesign?

- What’s the realistic daily/weekly expansion rate once started?

- Are monitoring and failover plans documented?

4) Operational support model

- Who owns commissioning and acceptance testing?

- What’s the maintenance plan (and SLA) for cooling components?

- Is there a single accountable party when performance degrades?

5) Business alignment (the part most teams skip)

- Map capacity increments to use-cases (customer service, marketing, fraud).

- Decide what must be low latency (edge/near-edge) vs what can be batch.

- Define “production-ready” for your AI tools: uptime, latency, data residency, audit logs.

This is where the AI Business Tools Singapore lens matters: tools don’t create advantage if the runtime can’t scale.
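A definition of “production-ready” is easier to enforce once it is written down as explicit targets. The following Python sketch is one possible shape for that, not a standard: the class names, field names, and threshold values are all hypothetical, and your own definition may need more criteria than the four listed in the checklist.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ProductionTargets:
    """Agreed thresholds for calling an AI tool production-ready (hypothetical)."""
    min_uptime_pct: float
    max_p95_latency_ms: float
    required_region: str
    audit_logs_required: bool


@dataclass(frozen=True)
class Measured:
    """Observed values for one deployed AI tool (hypothetical)."""
    uptime_pct: float
    p95_latency_ms: float
    region: str
    audit_logs_enabled: bool


def gaps(targets: ProductionTargets, measured: Measured) -> list[str]:
    """Return the criteria that the measured deployment fails to meet."""
    issues = []
    if measured.uptime_pct < targets.min_uptime_pct:
        issues.append("uptime")
    if measured.p95_latency_ms > targets.max_p95_latency_ms:
        issues.append("latency")
    if measured.region != targets.required_region:
        issues.append("data residency")
    if targets.audit_logs_required and not measured.audit_logs_enabled:
        issues.append("audit logs")
    return issues


if __name__ == "__main__":
    # Illustrative values only.
    targets = ProductionTargets(99.9, 300.0, "sg", True)
    measured = Measured(99.95, 420.0, "sg", False)
    print(gaps(targets, measured))
```

An empty list means the tool meets the definition you agreed on; anything else is a concrete item to raise with the infrastructure provider.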

People also ask: do prefab systems only matter for hyperscalers?

No. Prefab infrastructure matters even more for mid-market and enterprise buyers because it reduces complexity. Hyperscalers can throw teams at problems and absorb schedule slips. Most Singapore businesses can’t.

Prefab systems make it easier for colocation providers, managed service partners, and enterprise facilities teams to deliver repeatable AI-ready environments. That translates into more options for buyers—and fewer “custom build” surprises.

Where this goes next: AI capacity as a competitive constraint

By mid-2026, many Singapore firms will compete on their ability to ship AI features reliably, not just prototype them. That shifts the conversation from “which model?” to “which operating model?”—including infrastructure.

Vertiv’s SmartRun launch is one more sign that data centre design is reorganising around AI: higher densities, liquid cooling as a standard requirement, and modular construction to keep schedules realistic.

If you’re building an AI roadmap for marketing, operations, or customer engagement, take a hard look at your compute plan. Is it a line item, or is it a strategy? The companies that answer that well will ship faster—and keep shipping when everyone else is stuck waiting for capacity.