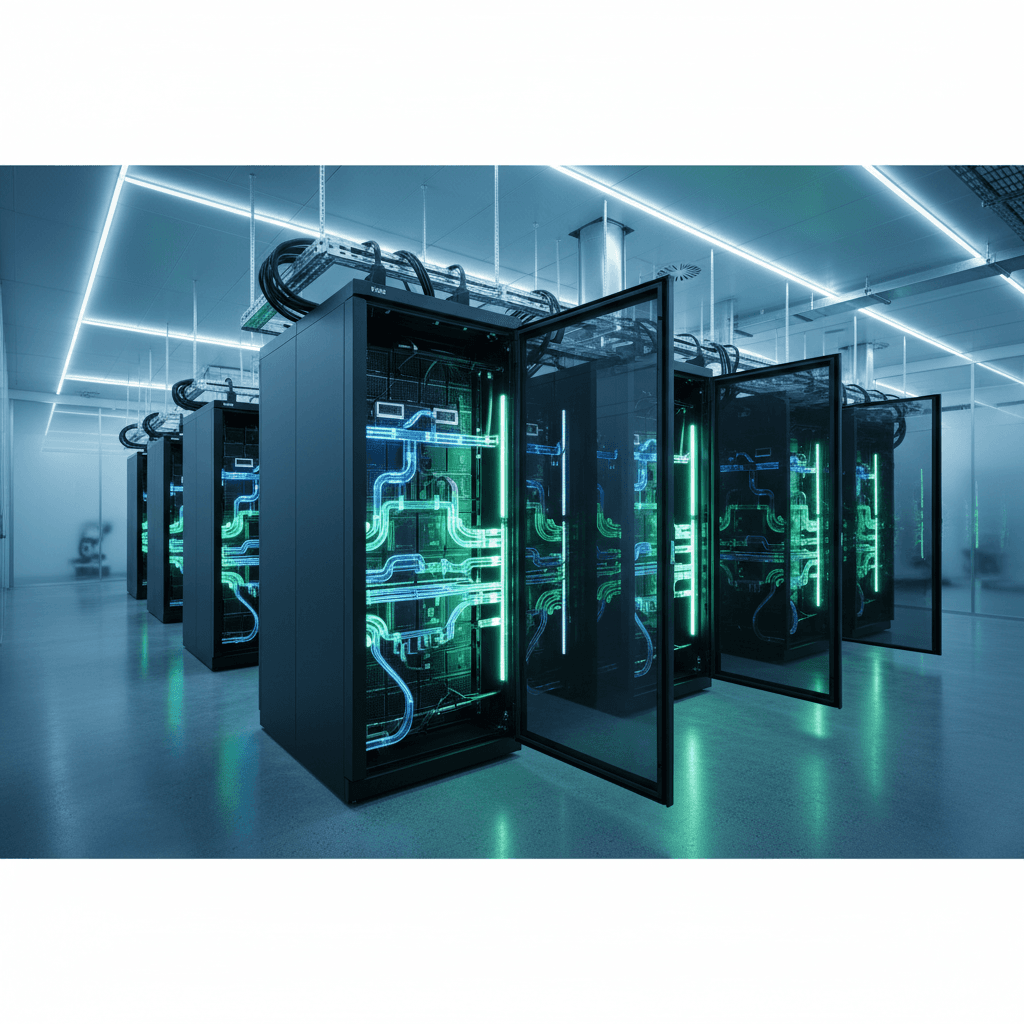

AI racks are racing toward 1 MW each. Microfluidic cooling removes chip heat from the inside out, cutting energy and water use while boosting performance.

How Microfluidic Cooling Makes AI More Sustainable

Rack power in hyperscale data centers has jumped from around 6 kW to over 270 kW in less than a decade, and designs pushing 480 kW–1 MW per rack are already on the roadmap. That isn’t just an engineering milestone; it’s a sustainability problem.

AI training clusters now draw as much power as small towns. Every extra watt going into a GPU eventually turns into heat that has to be removed. If the industry sticks with conventional air cooling and blunt liquid cooling, the energy, water, and community impact of these “AI factories” will keep spiraling.

Microfluidic cooling offers a different path: cool the chip from the inside out, with far less energy and water, and unlock more performance per watt in the process. For anyone building or buying AI infrastructure as part of a broader green technology strategy, this isn’t a niche curiosity. It’s one of the few levers left that can make AI genuinely sustainable at scale.

Why Traditional Data Center Cooling Is Hitting a Wall

Traditional data center cooling—mostly air, plus increasingly direct-to-chip liquid—simply wasn’t built for dense AI clusters.

The density problem

GPU-accelerated racks used for AI training and inference are pushing into hundreds of kilowatts per rack:

- ~6 kW/rack (typical 2010s enterprise loads)

- ~20–40 kW/rack (modern dense CPU racks)

- 270 kW/rack today for leading AI systems

- 480 kW and even 1 MW/rack within a couple of years, according to Dell’s global industries CTO

Air cooling struggles beyond ~20–30 kW per rack without extreme measures (hot/cold aisle containment, high static pressure fans, specialized computer room air conditioning (CRAC) units). Beyond that, you get:

- Skyrocketing fan power and chiller power

- Hotspots that degrade chip reliability

- Design constraints on where you can put high-density racks
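The density figures above can be compared against the air-cooling range with a few lines of Python. This is a minimal sketch: the 30 kW ceiling is an assumption taken from the upper end of the ~20–30 kW range, and the helper names are hypothetical.

```python
# Rack power figures quoted in the article, in kW per rack.
RACK_CLASSES_KW = {
    "2010s enterprise": 6,
    "dense CPU (upper end)": 40,
    "leading AI today": 270,
    "roadmap": 480,
    "roadmap (upper end)": 1000,
}

# Assumption: practical air-cooling ceiling without extreme measures,
# taken from the upper end of the ~20-30 kW range.
AIR_COOLING_LIMIT_KW = 30.0
BASELINE_KW = 6.0  # typical 2010s enterprise rack


def multiple_of_air_limit(rack_kw: float, limit_kw: float = AIR_COOLING_LIMIT_KW) -> float:
    """Rack power expressed as a multiple of the assumed air-cooling ceiling."""
    return rack_kw / limit_kw


def growth_vs_baseline(rack_kw: float, baseline_kw: float = BASELINE_KW) -> float:
    """Rack power expressed as a multiple of the ~6 kW baseline."""
    return rack_kw / baseline_kw


if __name__ == "__main__":
    for name, kw in RACK_CLASSES_KW.items():
        ratio = multiple_of_air_limit(kw)
        status = "within air limit" if ratio <= 1 else f"{ratio:.1f}x the air limit"
        print(f"{name:>22}: {kw:>5} kW, {growth_vs_baseline(kw):4.0f}x baseline, {status}")
```

At 270 kW, a rack is 45 times the 6 kW baseline and nine times the assumed air-cooling ceiling.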

From a green technology lens, this is ugly. A large fraction of the facility’s power budget goes just to move air and chill water that ultimately gets vented as waste heat.

The water footprint question

AI isn’t just an energy story; it’s a water story too. Conventional liquid cooling flows large volumes of water or coolant past cold plates to pull heat away.

Current practice is roughly 1.5 liters per minute per kilowatt of chip power. As single chips approach 10 kW, that means 15 L/minute per chip. Multiply that by tens of thousands or millions of accelerators in an AI campus and you hit volumes that understandably worry local communities and regulators.
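The arithmetic in this paragraph is easy to reproduce. Here is a minimal Python sketch using the quoted 1.5 L/min per kW rule of thumb; the fleet sizes in the usage lines are hypothetical. Note that this is coolant circulating through the loop, not water consumed: how much fresh water a site actually withdraws depends on how the loop rejects its heat (for example, evaporative towers versus dry coolers).

```python
# Flow rate quoted in the article for conventional liquid cooling.
LPM_PER_KW = 1.5  # liters per minute per kW of chip power


def flow_per_chip_lpm(chip_kw: float, lpm_per_kw: float = LPM_PER_KW) -> float:
    """Coolant flow needed for one chip, in liters per minute."""
    return chip_kw * lpm_per_kw


def fleet_flow_lpm(chip_kw: float, num_chips: int, lpm_per_kw: float = LPM_PER_KW) -> float:
    """Total circulating coolant flow for a fleet of identical accelerators, in L/min."""
    return flow_per_chip_lpm(chip_kw, lpm_per_kw) * num_chips


if __name__ == "__main__":
    print(f"Per 10 kW chip: {flow_per_chip_lpm(10):.1f} L/min")
    # Hypothetical campus sizes, for illustration only.
    for n in (10_000, 100_000, 1_000_000):
        print(f"{n:>9,} chips: {fleet_flow_lpm(10, n):,.0f} L/min circulating")
```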

If green technology is supposed to reduce environmental footprint, that kind of water usage is a non-starter in many regions.

What Microfluidic Cooling Actually Is

Microfluidic cooling flips the traditional approach: instead of pushing a lot of coolant past the outside of a hot chip, it routes tiny streams of coolant directly to the chip’s hottest regions.

Think of it as giving every hotspot on a chip its own tailored “capillary” instead of relying on a chunky, one-size-fits-all heatsink.

From cold plates to microchannels

Companies like Corintis are building this around two main elements:

- Microfluidic cold plates: copper plates with an internal network of microscopic channels, as narrow as ~70 micrometers (about the width of a human hair).

- Chip-specific channel design: simulation software designs a complex web of channels, like arteries, veins, and capillaries, to line up with where the chip actually generates heat.

This is fundamentally different from traditional direct-to-chip liquid cooling, where a relatively simple channel pattern or pin-fin structure sits under the cold plate. Those designs move heat off the package, but they don’t adapt to each chip’s actual thermal map.

The performance and efficiency gains

In joint tests with Microsoft on servers running Teams workloads, microfluidic cooling showed:

- 3x higher heat removal efficiency compared with existing cooling methods

- More than 80% reduction in chip temperature vs. air cooling

Lower chip temperatures translate directly into green technology benefits:

- Higher clock speeds at the same power (or same performance at lower power)

- Lower failure rates and longer hardware lifetimes

- Ability to run warmer coolant temperatures, cutting chiller usage and enabling heat reuse (district heating, industrial processes, etc.)

If you’re chasing both sustainability metrics and total cost of ownership (TCO), that combination is hard to ignore.

How Microfluidics Reduces Energy and Water Use

Microfluidic cooling is fundamentally about doing more with less coolant and less pumping power.

Targeted flow instead of brute force

Traditional liquid cooling often assumes a uniform heat load: you flood the plate with enough coolant to safely handle the worst-case hotspot. In practice, chips are uneven:

- Some regions barely warm up

- Others run near thermal limits under heavy AI workloads

Microfluidic designs use simulation and thermal emulation to map where heat really appears at millimeter-scale resolution. Then they shape channels and control flows to route coolant precisely to those hotspots.

This does a few things:

- Reduces the total volume of coolant needed per chip

- Cuts pumping power (less volume, optimized pressure drops)

- Avoids overcooling low-activity regions

The result: less water (or other coolant), lower facility energy overhead, and more performance per watt.
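Targeted flow can be illustrated with a deliberately simplified model. This sketch assumes each region of the chip has its own channel, that every channel lets the coolant warm by the same fixed amount, and that the coolant is water; it ignores heat spreading between regions and treats the pressure drop as fixed. The heat map, temperature rise, pressure drop, and pump efficiency are hypothetical values, not measurements. Under those assumptions, the flow a region needs follows from Q = m_dot × c_p × ΔT, and hydraulic pump power is flow times pressure drop divided by pump efficiency.

```python
WATER_CP_J_PER_KG_K = 4186.0  # specific heat of water
WATER_DENSITY_KG_PER_L = 1.0  # approximation near room temperature


def flow_lpm_for_heat(heat_w: float, delta_t_k: float) -> float:
    """Flow (L/min) that absorbs heat_w watts with a coolant temperature rise of delta_t_k."""
    mass_flow_kg_s = heat_w / (WATER_CP_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_L * 60.0


def uniform_flow_lpm(region_heat_w: list[float], delta_t_k: float) -> float:
    """Brute force: every region's channel is sized for the worst-case hotspot."""
    worst = max(region_heat_w)
    return len(region_heat_w) * flow_lpm_for_heat(worst, delta_t_k)


def targeted_flow_lpm(region_heat_w: list[float], delta_t_k: float) -> float:
    """Targeted: each region's channel gets only the flow its own heat load needs."""
    return sum(flow_lpm_for_heat(q, delta_t_k) for q in region_heat_w)


def pump_power_w(flow_lpm: float, pressure_drop_kpa: float, pump_efficiency: float = 0.5) -> float:
    """Pump shaft power (W) = volumetric flow (m^3/s) * pressure drop (Pa) / efficiency."""
    flow_m3_s = flow_lpm / 60_000.0
    return flow_m3_s * pressure_drop_kpa * 1_000.0 / pump_efficiency


if __name__ == "__main__":
    # Hypothetical per-region heat map for one chip, in watts (1 kW total).
    heat_map = [50, 60, 400, 80, 300, 40, 50, 20]
    dt = 10.0   # assumed allowable coolant temperature rise, K
    dp = 50.0   # assumed loop pressure drop, kPa, held fixed for both cases
    u = uniform_flow_lpm(heat_map, dt)
    t = targeted_flow_lpm(heat_map, dt)
    print(f"uniform:  {u:.2f} L/min, pump {pump_power_w(u, dp):.2f} W")
    print(f"targeted: {t:.2f} L/min, pump {pump_power_w(t, dp):.2f} W")
```

With this hypothetical heat map, targeted flow is roughly a third of the uniform flow (1,000 W of total heat versus eight channels each sized for a 400 W hotspot). Real microchannels also raise the pressure drop per unit of flow, which is why the channel layout has to be optimized rather than simply narrowed.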

Shrinking the facility’s energy overhead

Data centers often use Power Usage Effectiveness (PUE) to describe how much overhead is required beyond the IT load. PUE is total facility power divided by IT equipment power, so a PUE of 1.2 means 20% overhead on top of server power.
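To see how cooling overhead moves PUE, here is a minimal Python sketch. The load split (IT, cooling, other overhead) is hypothetical, and the one-third cooling reduction is an illustrative scenario, not a measured result for microfluidic cooling.

```python
def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float) -> float:
    """Power Usage Effectiveness = total facility power / IT equipment power."""
    total_kw = it_kw + cooling_kw + other_overhead_kw
    return total_kw / it_kw


if __name__ == "__main__":
    it = 1000.0                # hypothetical IT load, kW
    other = 50.0               # hypothetical distribution losses, lighting, etc., kW
    baseline_cooling = 150.0   # hypothetical cooling load, kW
    print(f"baseline PUE:        {pue(it, baseline_cooling, other):.2f}")
    # Illustrative scenario only: cooling power cut by a third.
    print(f"reduced-cooling PUE: {pue(it, baseline_cooling * 2 / 3, other):.2f}")
```

In this scenario the baseline works out to the 1.2 from the text, and trimming cooling from 150 kW to 100 kW brings it to 1.15. Because only overhead sits above 1.0, every kilowatt of cooling removed shows up directly in the ratio.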

Microfluidic cooling supports greener PUE values in two ways:

- Higher coolant temperatures

If chips run that much cooler than they do under air cooling, you can safely raise the inlet temperature of your coolant loop. Warmer loops mean less chiller work, easier free cooling, and more viable heat reuse.

- Lower pump and fan power

With targeted microchannels and less flow, you don’t need as much pumping horsepower. And because more heat is pulled out in liquid, you can dial back or even remove some air handling.
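Why warmer loops make free cooling easier can be shown with a simple count of hours. This sketch assumes a dry cooler can bring the loop down to ambient plus a fixed approach temperature (5 K here, a hypothetical value) and uses a made-up day of hourly ambient readings. Any hour where ambient plus approach is at or below the required supply temperature can run without a chiller.

```python
def free_cooling_fraction(ambient_temps_c: list[float], supply_temp_c: float,
                          approach_k: float = 5.0) -> float:
    """Fraction of hours a dry cooler alone can deliver the required supply temperature.

    Assumes the dry cooler cools the loop to ambient + approach_k.
    """
    if not ambient_temps_c:
        raise ValueError("need at least one ambient temperature sample")
    ok = sum(1 for t in ambient_temps_c if t + approach_k <= supply_temp_c)
    return ok / len(ambient_temps_c)


if __name__ == "__main__":
    # Hypothetical hourly ambient temperatures (deg C) for one summer day.
    ambient = [14, 13, 12, 12, 11, 11, 12, 14, 17, 20, 23, 26,
               28, 30, 31, 31, 30, 28, 25, 22, 20, 18, 16, 15]
    for supply in (20.0, 30.0, 40.0):
        frac = free_cooling_fraction(ambient, supply)
        print(f"supply {supply:.0f} C: chiller-free {frac:.0%} of hours")
```

For this sample day, raising the supply temperature from 20 °C to 30 °C lifts chiller-free hours from 9 of 24 to 17 of 24, and at 40 °C every hour qualifies.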

In practice, I’ve seen teams model this and discover that small improvements in chip-level thermal efficiency ripple out into:

- Smaller or fewer chillers

- Lower-capacity pumps

- Simpler mechanical plants

From a green technology and CapEx perspective, that is exactly what you want.

From Cold Plates to Chips: The Next Stage of Microfluidic Cooling

The current generation of microfluidic cooling is already impressive, but the roadmap is bolder: etching cooling channels directly into the chip package, or even into the silicon itself.

Today: advanced cold plates

Right now, Corintis and others are focused on:

- High-volume additive manufacturing of copper cold plates with ultra-fine channels

- Design tools that let chip and system vendors simulate, optimize, and validate cooling layouts

- Thermal emulation platforms that can mimic different AI workloads during testing

Corintis claims it can improve cold plate performance by at least 25% over conventional designs, and it has already produced 10,000+ such plates, targeting 1 million units annually by the end of 2026.

These are drop-in compatible with existing direct-to-chip liquid cooling loops, which makes adoption less painful for operators.

Tomorrow: cooling as part of the chip

The real unlock comes when cooling isn’t an add-on layer, but part of chip design itself.

Right now, the interface between chip and cold plate is a bottleneck: you’ve got layers of packaging material, TIM (thermal interface material), and mechanical tolerances all adding resistance.

By etching microfluidic channels inside the package (or eventually into the silicon stack in 3D chips), you:

- Move coolant within microns of the transistor layers

- Slash thermal resistance

- Open the door to 10x improvements in cooling performance
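The thermal-resistance argument can be made concrete with a one-dimensional series model, T_junction = T_coolant + P × ΣR. The layer values below are hypothetical, chosen only to show the mechanism. Real packages need 3D simulation because hotspots and heat spreading matter. Removing the TIM, lid, and separate cold plate from the heat path is what shrinks ΣR.

```python
def junction_temp_c(coolant_c: float, power_w: float, resistances_k_per_w: list[float]) -> float:
    """Steady-state junction temperature for heat flowing through layers in series."""
    return coolant_c + power_w * sum(resistances_k_per_w)


def max_coolant_temp_c(junction_limit_c: float, power_w: float,
                       resistances_k_per_w: list[float]) -> float:
    """Warmest coolant that keeps the junction at or below its limit."""
    return junction_limit_c - power_w * sum(resistances_k_per_w)


if __name__ == "__main__":
    # Hypothetical series resistances in K/W; real values depend on the package.
    cold_plate_stack = {"silicon + package": 0.005, "TIM": 0.010,
                        "lid": 0.005, "cold plate": 0.020}
    embedded_stack = {"silicon": 0.003, "in-package microchannels": 0.010}
    for name, stack in (("cold plate", cold_plate_stack), ("embedded", embedded_stack)):
        r = list(stack.values())
        print(f"{name:>10}: R_total={sum(r):.3f} K/W, "
              f"T_j at 40 C coolant={junction_temp_c(40.0, 1000.0, r):.0f} C, "
              f"max coolant for 85 C limit={max_coolant_temp_c(85.0, 1000.0, r):.0f} C")
```

For a 1 kW chip and 40 °C coolant, the hypothetical embedded stack runs about 27 K cooler. Read the other way, the same drop in resistance lets the loop run much warmer for the same junction limit, which is what enables the chiller and heat-reuse gains discussed above.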

Corintis is already prototyping this in Switzerland on small production lines, with the goal of handing these concepts to major chip vendors and cold plate manufacturers. That’s exactly the kind of chip-cooling co-design the AI ecosystem has been missing.

For the broader green technology narrative, this is crucial: you don’t get truly sustainable AI if cooling is bolted on after the fact. Thermal performance has to be a first-class design constraint, right next to FLOPS and memory bandwidth.

What This Means for Green AI Strategies

If you’re planning AI capacity for 2025–2030, microfluidic cooling isn’t just “nice to have.” It changes the math on sustainability, cost, and community impact.

For data center operators

Here’s where microfluidics fits into a green technology roadmap:

- Higher rack density with lower PUE: Pack more AI performance into the same footprint without blowing through your energy or cooling budgets.

- Reduced water risk: Use coolant more efficiently and support water-scarce regions with lower draw and higher-temperature loops (better for dry coolers).

- Better heat reuse projects: Higher outlet temperatures make it practical to feed district heating networks or industrial processes.

- Easier ESG storytelling: You can credibly show that you’re not just powering AI with renewable energy but running it efficiently at the silicon level.
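Whether waste heat can feed a heat network directly comes down to two numbers: how much heat the loop carries and whether its outlet is hot enough. Here is a minimal sketch, assuming water coolant, a fixed heat-exchanger approach, and hypothetical flow and temperatures. Below the network's supply temperature you would need a heat pump, which this sketch does not model.

```python
WATER_CP_J_PER_KG_K = 4186.0
WATER_DENSITY_KG_PER_L = 1.0  # approximation


def recoverable_heat_kw(flow_lpm: float, outlet_c: float, return_c: float) -> float:
    """Heat carried by the loop in kW: Q = m_dot * c_p * (T_out - T_return)."""
    mass_flow_kg_s = flow_lpm * WATER_DENSITY_KG_PER_L / 60.0
    return mass_flow_kg_s * WATER_CP_J_PER_KG_K * (outlet_c - return_c) / 1000.0


def direct_reuse_possible(outlet_c: float, network_supply_c: float,
                          hx_approach_k: float = 3.0) -> bool:
    """True if a heat exchanger alone can meet the network's supply temperature."""
    return outlet_c - hx_approach_k >= network_supply_c


if __name__ == "__main__":
    network_c = 55.0  # hypothetical district heating supply temperature
    for outlet in (45.0, 60.0):  # cooler vs warmer loop, hypothetical
        heat = recoverable_heat_kw(flow_lpm=600.0, outlet_c=outlet, return_c=outlet - 10.0)
        ok = direct_reuse_possible(outlet, network_c)
        print(f"outlet {outlet:.0f} C: {heat:.0f} kW available, direct reuse: {ok}")
```

Both loops carry the same heat here; only the warmer one can hand it to the network without extra equipment.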

If you’re evaluating new AI halls or retrofits, I’d bake microfluidic-capable designs into RFPs now. Even if you start with traditional direct-to-chip, you’ll want the option to upgrade.

For enterprises buying AI capacity

Most enterprises won’t design their own cooling loops, but they can still push for greener AI:

- Ask cloud and colocation providers whether they support chip-specific liquid cooling or microfluidic tech in their AI offerings.

- Prioritize AI services hosted in facilities with low PUE and advanced liquid cooling, not just “renewable-powered” stickers.

- Include cooling efficiency and environmental impact criteria in AI procurement and sustainability reporting.

Your AI strategy, energy strategy, and sustainability targets are converging whether you plan for it or not. Cooling efficiency gives you a tangible metric to point at, and microfluidic cooling is a concrete way to improve it.

For chip and system designers

The opportunity is even bigger:

- Co-design chips and cooling to increase performance per watt, not just raw performance.

- Use thermal emulation in early design phases to validate how future AI workloads will interact with microfluidic layouts.

- Differentiate with platforms that let customers hit aggressive ESG goals without sacrificing capability.

The companies that treat thermal design as a core part of green technology—not an afterthought—will have a serious edge as regulations tighten and energy prices stay volatile.

Where Microfluidic Cooling Fits in the Green Technology Story

Green technology isn’t only about wind turbines and solar panels. It’s also about how efficiently we use every watt that those clean energy systems produce.

AI can accelerate climate modeling, grid optimization, materials discovery, and industrial efficiency. But none of that makes sense if the infrastructure running those models wastes energy and water.

Microfluidic cooling sits exactly at that intersection:

- It shrinks the environmental footprint of AI hardware.

- It extends the useful life of chips, reducing e‑waste.

- It cuts energy and water overhead, easing the burden on grids and communities.

If your organization is serious about sustainable AI, it’s time to treat cooling as a strategic topic, not a back-of-house mechanical detail. Ask your vendors hard questions. Push for chip-cooling co-design. And when you evaluate new AI capacity, weigh not just teraflops per dollar, but teraflops per kilowatt and per liter.
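The closing advice amounts to normalizing AI capacity by power and water as well as price. The article doesn't define these metrics precisely, so this sketch assumes facility-level power (IT load times PUE) and water actually consumed per hour. The class name and the sample offers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CapacityOption:
    """One AI capacity offer, described by figures a buyer can obtain or estimate."""
    name: str
    tflops: float            # sustained throughput
    facility_kw: float       # facility power attributed to it (IT power x PUE)
    water_l_per_hour: float  # water consumed (withdrawn), not just circulated
    cost_per_hour: float     # price in any consistent currency

    def tflops_per_kw(self) -> float:
        return self.tflops / self.facility_kw

    def tflops_per_l_per_hour(self) -> float:
        return self.tflops / self.water_l_per_hour

    def tflops_per_cost_hour(self) -> float:
        return self.tflops / self.cost_per_hour


if __name__ == "__main__":
    # Hypothetical offers for illustration only.
    options = [
        CapacityOption("option A", tflops=1000, facility_kw=150, water_l_per_hour=400, cost_per_hour=300),
        CapacityOption("option B", tflops=1000, facility_kw=120, water_l_per_hour=150, cost_per_hour=320),
    ]
    # Rank by energy efficiency first, then inspect the water and cost figures.
    for o in sorted(options, key=CapacityOption.tflops_per_kw, reverse=True):
        print(f"{o.name}: {o.tflops_per_kw():.2f} TFLOPS/kW, "
              f"{o.tflops_per_l_per_hour():.2f} TFLOPS per L/h, "
              f"{o.tflops_per_cost_hour():.2f} TFLOPS per cost/h")
```

A cheaper option can still lose on power and water once all three ratios sit side by side, which is the trade-off the procurement questions above are meant to surface.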

The next wave of AI growth will be defined not only by bigger models, but by smarter thermals. Microfluidics is one of the clearest signs of what that future looks like.