AI-powered energy partnerships are becoming essential for U.S. data centers. Learn how AI and renewables can secure power, cut costs, and scale digital services.

AI-Powered Energy Partnerships for U.S. Data Centers

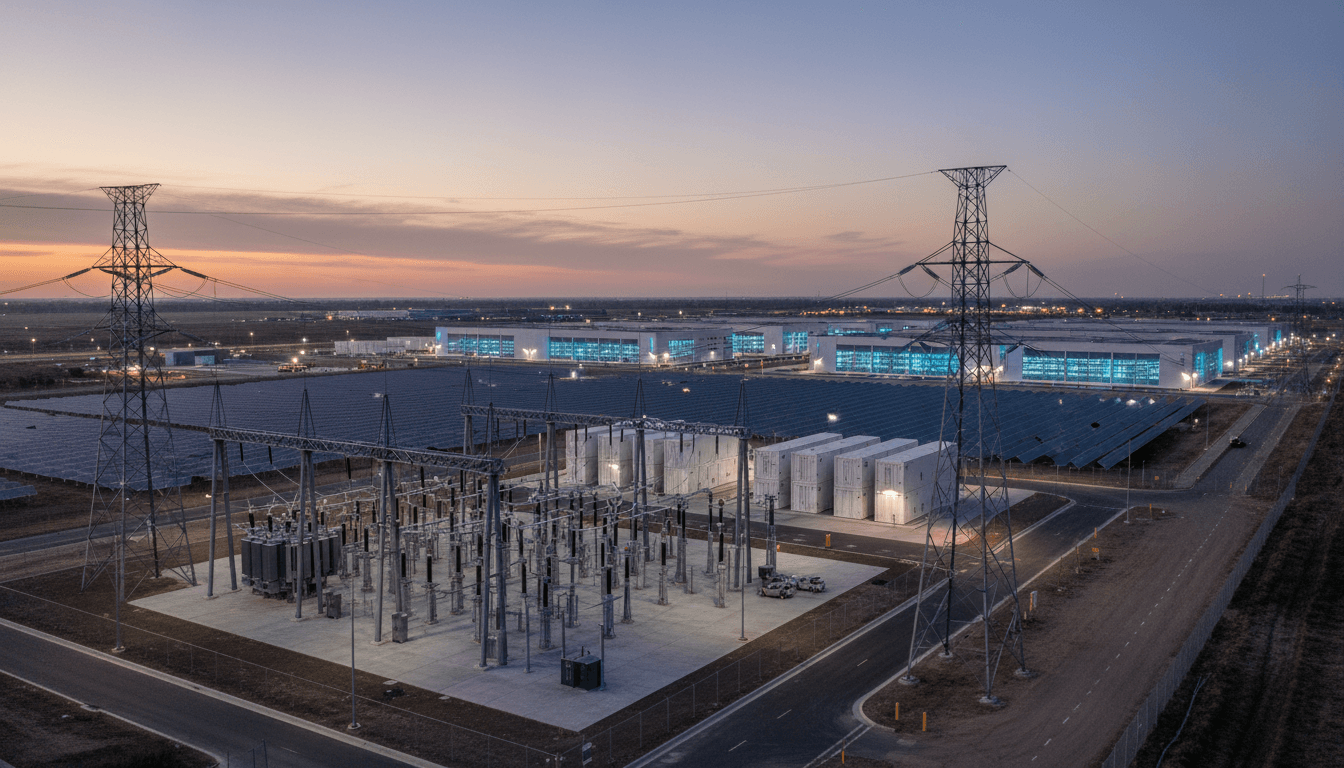

A single large AI data center can draw tens to hundreds of megawatts—roughly the electricity demand of a small city. That reality has turned energy from a back-office utility line item into a front-page strategic constraint for U.S. cloud computing and digital services.

That’s why the reported partnership, in which OpenAI and SoftBank Group team up with SB Energy, matters even though the original announcement page wasn’t accessible (the source URL returned a 403). The signal is still clear: leading AI labs and capital-heavy tech investors are treating energy supply, grid coordination, and power procurement as core infrastructure for scaling AI.

This post is part of our “AI in Cloud Computing & Data Centers” series, and it makes one point bluntly: the next era of AI progress in the U.S. will be constrained as much by electrons as by algorithms. Strategic partnerships with energy developers are becoming a practical way to keep AI services reliable, affordable, and faster to deploy.

Why AI companies are teaming up with energy developers

Answer first: AI leaders partner with energy firms because power availability and interconnection timelines now gate how quickly new compute capacity can come online.

If you run AI training clusters or high-utilization inference fleets, you don’t just need more GPUs—you need:

- Firm capacity (power you can count on during peak hours)

- Predictable pricing (to keep inference unit economics from swinging wildly)

- Faster site readiness (substations, transmission access, and interconnection queue progress)

The hard constraint: interconnection and time-to-power

In the U.S., adding large new loads often means navigating multi-year planning cycles—utility studies, queue positions, substation upgrades, and permitting. Even when generation is available, getting it to a specific data center location is the bottleneck.

This is where an energy developer like SB Energy becomes strategically interesting. A capable developer brings a portfolio approach—site control, generation pipeline, market expertise, and relationships with transmission operators—that most AI companies don’t want to build from scratch.

The business constraint: AI unit economics are tied to electricity

Electricity is a major component of data center operating cost, and for AI-heavy workloads it’s not a rounding error. High-density racks, advanced cooling, and constant utilization amplify the energy line.

A partnership can make costs more predictable through:

- Long-term power contracts (often PPAs)

- Structured hedging

- Co-development of generation and storage near load

When you’re selling AI-driven digital services at scale, predictability beats heroics.
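To make the predictability point concrete, here is a minimal sketch of how one common hedge shape behaves. It assumes a simple contract for differences (the settlement style used in many virtual PPAs) with perfectly matched volume. The strike price, volume, and function name are hypothetical, and real contracts also carry basis and shape risk that this ignores.

```python
def net_cost_with_cfd(market_price: float, strike_price: float, volume_mwh: float) -> float:
    """Net cost of buying energy at market while settling a contract for differences."""
    market_cost = market_price * volume_mwh
    # The buyer pays the difference when market < strike and receives it when market > strike.
    settlement = (strike_price - market_price) * volume_mwh
    return market_cost + settlement


# Hypothetical numbers, for illustration only.
strike = 55.0      # $/MWh agreed in the contract
volume = 10_000.0  # MWh consumed in the period

for market in (30.0, 55.0, 120.0):
    unhedged = market * volume
    hedged = net_cost_with_cfd(market, strike, volume)
    print(f"market ${market:>6.2f}/MWh  unhedged ${unhedged:>12,.0f}  hedged ${hedged:>12,.0f}")
```

Under these assumptions the hedged cost is the same in every market scenario (strike times volume), while the unhedged cost swings with the market price.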

What this partnership trend means for cloud computing and data centers

Answer first: Expect more “AI + energy” deals that look less like PR and more like infrastructure finance, because compute expansion is now a supply-chain problem.

In the last few years, the data center conversation shifted from “Where can we buy servers?” to “Where can we buy capacity—compute plus power plus cooling plus permits?” That shift is why energy partnerships are moving into the same strategic tier as chip supply agreements.

Data center energy efficiency is no longer enough

Efficiency work still matters—power usage effectiveness (PUE) optimization, airflow management, liquid cooling, workload scheduling. But even well-run facilities hit a ceiling if the grid can’t support incremental load.
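For reference, PUE is total facility energy divided by IT equipment energy. The short sketch below computes it for two hypothetical sets of meter readings; the numbers and variable names are illustrative assumptions, not measurements from any real facility.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical monthly meter readings (kWh), for illustration only.
it_kwh = 10_000_000
pue_before = pue(15_000_000, it_kwh)  # less efficient facility
pue_after = pue(12_000_000, it_kwh)   # after cooling and airflow work

# Energy saved by the efficiency work, with the IT load unchanged.
saved_kwh = (pue_before - pue_after) * it_kwh
print(f"PUE {pue_before:.2f} -> {pue_after:.2f}, saved {saved_kwh:,.0f} kWh")
```

Even in the improved case, the 10 GWh of IT load still has to be supplied, which is why efficiency gains alone do not remove the grid constraint.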

So the modern playbook is two-layered:

- Optimize consumption (AI for cooling control, predictive maintenance, capacity planning)

- Secure supply (renewables, storage, firming, transmission planning)

The partnership angle (OpenAI + SoftBank + SB Energy) sits squarely in layer two. It’s about ensuring there’s enough clean, reliable power to support the next wave of AI services.

Why renewables show up in AI infrastructure conversations

For AI and cloud operators, renewables aren’t just a climate story—they’re a deployment story. Utility-scale solar and wind can be built relatively quickly compared with some traditional generation pathways, and they can be paired with battery energy storage systems (BESS) to smooth output.

In practice, data centers need a portfolio:

- Renewables for cost and carbon benefits

- Storage for ramping, peak shaving, and resiliency

- Grid services and demand response for flexibility

- Backup generation for reliability (in some regions)

Energy developers that already manage these assets can accelerate outcomes.

Where AI actually helps in energy (beyond the hype)

Answer first: AI helps energy systems by improving forecasting, dispatch, maintenance, and congestion management, which directly supports data center reliability and cost control.

People often assume “AI in energy” means a smart thermostat. The more consequential applications are industrial and grid-scale. Here are the practical areas where I’ve seen the most measurable impact.

AI forecasting for renewables and load

Accurate forecasting reduces waste and improves grid stability. Models can predict:

- Solar and wind output (using weather and satellite data)

- Local load curves (including data center demand spikes)

- Price volatility in wholesale electricity markets

Better forecasts mean better scheduling—charging batteries when power is cheap and plentiful, discharging during peaks, and reducing imbalance penalties.
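As a rough sketch of how a price forecast turns into a schedule, the function below picks the single charge hour and later discharge hour with the widest price spread. The forecast values are made up, and the sketch deliberately ignores round-trip losses, power limits, and multi-cycle operation, all of which a real scheduler would need to handle.

```python
def best_charge_discharge(prices):
    """Return (charge_hour, discharge_hour, spread) with the largest price spread,
    where charging must happen before discharging. Returns (None, None, 0.0)
    if no profitable pair exists."""
    best = (None, None, 0.0)
    cheapest_hour = 0
    for hour in range(1, len(prices)):
        spread = prices[hour] - prices[cheapest_hour]
        if spread > best[2]:
            best = (cheapest_hour, hour, spread)
        if prices[hour] < prices[cheapest_hour]:
            cheapest_hour = hour
    return best


# Hypothetical hourly price forecast in $/MWh.
forecast = [42, 38, 35, 30, 33, 40, 55, 70, 65, 50, 45, 90, 85, 60]
charge_hour, discharge_hour, spread = best_charge_discharge(forecast)
print(f"charge at hour {charge_hour}, discharge at hour {discharge_hour}, spread ${spread}/MWh")
```

The quality of the result depends entirely on the forecast: a wrong price curve produces a confident but wrong schedule.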

AI dispatch and storage optimization

Battery dispatch is a math problem with real money attached. AI and optimization tools help determine:

- When to charge vs. discharge

- How to provide ancillary services (frequency regulation)

- How to support peak shaving for large loads

For a data center operator, even small improvements in dispatch strategy can translate into meaningful cost savings—especially when demand charges and congestion pricing bite.
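The peak-shaving case can be sketched with a simple threshold rule: discharge the battery whenever load exceeds a target, limited by the battery's power rating and remaining energy. This is a minimal illustration under stated assumptions (no recharging during the window, no efficiency losses, hypothetical load and battery figures), not an optimal dispatch algorithm.

```python
def peak_shave(load_mw, threshold_mw, battery_mwh, max_power_mw, interval_h=1.0):
    """Return grid draw (MW) per interval after discharging a battery above a threshold."""
    state_of_charge = battery_mwh
    grid_mw = []
    for load in load_mw:
        excess = max(0.0, load - threshold_mw)
        # Discharge is capped by the excess, the power rating, and the energy left.
        discharge = min(excess, max_power_mw, state_of_charge / interval_h)
        state_of_charge -= discharge * interval_h
        grid_mw.append(load - discharge)
    return grid_mw


# Hypothetical hourly load (MW) and battery parameters.
load = [80, 95, 110, 120, 105, 90]
grid = peak_shave(load, threshold_mw=100, battery_mwh=30, max_power_mw=15)
print(f"peak without battery: {max(load)} MW, with battery: {max(grid)} MW")
```

In this example the battery cannot hold the 100 MW target through the 120 MW hour because of its 15 MW power limit, so the billed peak falls to 105 MW rather than 100 MW. Sizing decisions like this are where dispatch strategy and demand charges interact.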

Predictive maintenance for grid and generation assets

Unplanned outages are expensive, and for AI services they can be reputationally damaging. Predictive maintenance uses sensor data and anomaly detection to reduce failure risk in:

- Inverters and transformers

- Substation equipment

- Cooling systems and power distribution units inside data centers

This is where “AI in cloud computing” and “AI in energy” merge: the same machine learning ops discipline (monitoring, alerting, drift management) is required on both sides.

Congestion and curtailment reduction

Curtailment happens when renewables produce power that can’t be delivered due to transmission constraints. AI can help by recommending operational changes and forecasting congestion windows—useful for scheduling flexible loads.

A practical strategy for data centers is workload shifting:

- Run non-urgent training jobs when renewable output is high

- Shift batch inference to off-peak windows

- Use carbon-aware schedulers that consider marginal emissions

This is a real, operational lever—not a marketing bullet.
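One simple form of carbon-aware scheduling is to place a deferrable job in the contiguous window with the lowest average grid carbon intensity. The sketch below does that with a sliding window; the intensity forecast is invented for illustration, and a production scheduler would also weigh price, deadlines, and capacity.

```python
def lowest_carbon_window(intensity, duration_h):
    """Return (start_hour, mean_intensity) of the contiguous window of
    duration_h hours with the lowest mean carbon intensity."""
    if not 0 < duration_h <= len(intensity):
        raise ValueError("duration must be between 1 and the forecast length")
    window_sum = sum(intensity[:duration_h])
    best_start, best_sum = 0, window_sum
    for start in range(1, len(intensity) - duration_h + 1):
        # Slide the window: add the new hour, drop the one that fell out.
        window_sum += intensity[start + duration_h - 1] - intensity[start - 1]
        if window_sum < best_sum:
            best_start, best_sum = start, window_sum
    return best_start, best_sum / duration_h


# Hypothetical hourly marginal emissions forecast (gCO2/kWh).
forecast = [450, 430, 400, 300, 220, 180, 200, 280, 390, 460]
start, mean_intensity = lowest_carbon_window(forecast, duration_h=3)
print(f"start the 3-hour job at hour {start} (mean {mean_intensity:.0f} gCO2/kWh)")
```

The same function works for price-aware shifting if the input series is a price forecast instead of an emissions forecast.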

A practical blueprint: how to evaluate an AI–energy partnership

Answer first: Treat AI–energy partnerships like a reliability program with financial KPIs, not a branding exercise.

If you’re a CTO, data center director, or cloud operations leader, here’s a due-diligence checklist that keeps the conversation grounded.

1) Define the outcome in grid terms

Start with metrics your utility and your finance team both respect:

- Target MW capacity and ramp requirements

- Expected annual MWh consumption

- Reliability target (e.g., redundancy level, outage tolerance)

- Interconnection milestones and critical path dates

If those numbers aren’t clear, partnership discussions will drift into vague “innovation” talk.
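The first two metrics are linked by simple arithmetic: annual energy is capacity times load factor times hours in a year. The sketch below works that out and adds an average ramp rate; the 100 MW target, 0.85 load factor, and 16-month ramp are hypothetical planning inputs, not figures from any announced project.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def annual_mwh(capacity_mw: float, load_factor: float) -> float:
    """Expected annual energy for a load that averages load_factor of its capacity."""
    if not 0.0 <= load_factor <= 1.0:
        raise ValueError("load factor must be between 0 and 1")
    return capacity_mw * load_factor * HOURS_PER_YEAR


def ramp_mw_per_month(start_mw: float, target_mw: float, months: int) -> float:
    """Average monthly ramp needed to grow from start_mw to target_mw."""
    return (target_mw - start_mw) / months


# Hypothetical planning inputs.
energy = annual_mwh(capacity_mw=100, load_factor=0.85)
ramp = ramp_mw_per_month(start_mw=20, target_mw=100, months=16)
print(f"{energy:,.0f} MWh per year, ramping about {ramp:.1f} MW per month")
```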

2) Model cost with the right level of detail

Look beyond average $/MWh:

- Demand charges

- Congestion and basis risk

- Curtailment assumptions

- Battery degradation and replacement schedules

- Backup fuel costs (where applicable)

A good partner will share modeling assumptions transparently.
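To see why average $/MWh can mislead, the sketch below combines an energy charge with a demand charge and reports the effective all-in rate. The tariff structure is simplified to these two components, and every number is a hypothetical placeholder; real tariffs vary by utility and often include many more line items.

```python
def monthly_bill(energy_mwh, energy_price_per_mwh, peak_mw, demand_charge_per_kw):
    """Simplified monthly bill: energy charge plus a demand charge on the monthly peak."""
    energy_cost = energy_mwh * energy_price_per_mwh
    demand_cost = peak_mw * 1000 * demand_charge_per_kw  # MW converted to kW
    total = energy_cost + demand_cost
    return {
        "energy_cost": energy_cost,
        "demand_cost": demand_cost,
        "total": total,
        "effective_per_mwh": total / energy_mwh,
    }


# Hypothetical inputs: 60,000 MWh at $50/MWh, 100 MW peak, $15/kW demand charge.
bill = monthly_bill(
    energy_mwh=60_000,
    energy_price_per_mwh=50.0,
    peak_mw=100,
    demand_charge_per_kw=15.0,
)
print(f"total ${bill['total']:,.0f}, effective ${bill['effective_per_mwh']:.2f}/MWh")
```

With these inputs the headline energy price is $50/MWh, but the effective rate is $75/MWh once the demand charge is included.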

3) Ask who owns the operational complexity

Energy assets require 24/7 operational discipline. Decide early:

- Who runs dispatch?

- Who handles NERC/ISO/RTO compliance if needed?

- Who is on the hook for performance guarantees?

This is where experienced developers earn their keep.

4) Plan for flexibility, not just capacity

The winners in AI infrastructure will be the operators who can flex:

- Shift workloads based on price and carbon

- Ramp usage without tripping local constraints

- Add storage or additional generation without renegotiating everything

Flexibility is the difference between “we have power” and “we can scale.”

Reality check: If your data center strategy doesn’t include a power strategy, it isn’t a strategy; it’s a hope.

People also ask: quick answers about AI, data centers, and energy

Is renewable energy reliable enough for AI data centers?

Yes, when paired with the grid, storage, and smart operations. Data centers already rely on layered reliability (utility + UPS + generators). Adding renewables changes the supply mix, not the reliability engineering mindset.

Will AI make data centers more energy efficient?

AI can reduce waste, but it won’t cancel demand growth. Smarter cooling, predictive maintenance, and workload scheduling help—but rising AI usage is increasing total electricity consumption.

Why do partnerships matter more than buying power on the market?

Because time-to-power and interconnection drive time-to-revenue. Spot purchases don’t solve queue delays, infrastructure upgrades, or site readiness.

What to do next if you’re scaling AI workloads in the U.S.

The reported OpenAI–SoftBank–SB Energy partnership is a marker of where the market is headed: AI growth is becoming infrastructure-led. If you’re building or expanding AI-driven digital services, start treating energy as part of your platform architecture.

My recommendation is straightforward:

- Audit your power exposure (price volatility, demand charges, capacity constraints)

- Quantify flexibility (which workloads can move in time or location)

- Engage energy partners early (developers, utilities, storage providers)

- Instrument everything (from PUE to dispatch decisions)

The next 24 months will reward teams that can ship capacity fast without blowing up operating costs.

If you’re planning a new AI cluster or a data center expansion, what’s your current constraint—GPUs, grid interconnection, or ongoing energy cost?

Landing page: https://openai.com/index/stargate-sb-energy-partnership