AI data centers face power limits. Learn why nuclear is back in the conversation—and how to scale AI with credibility, efficiency, and better workload control.

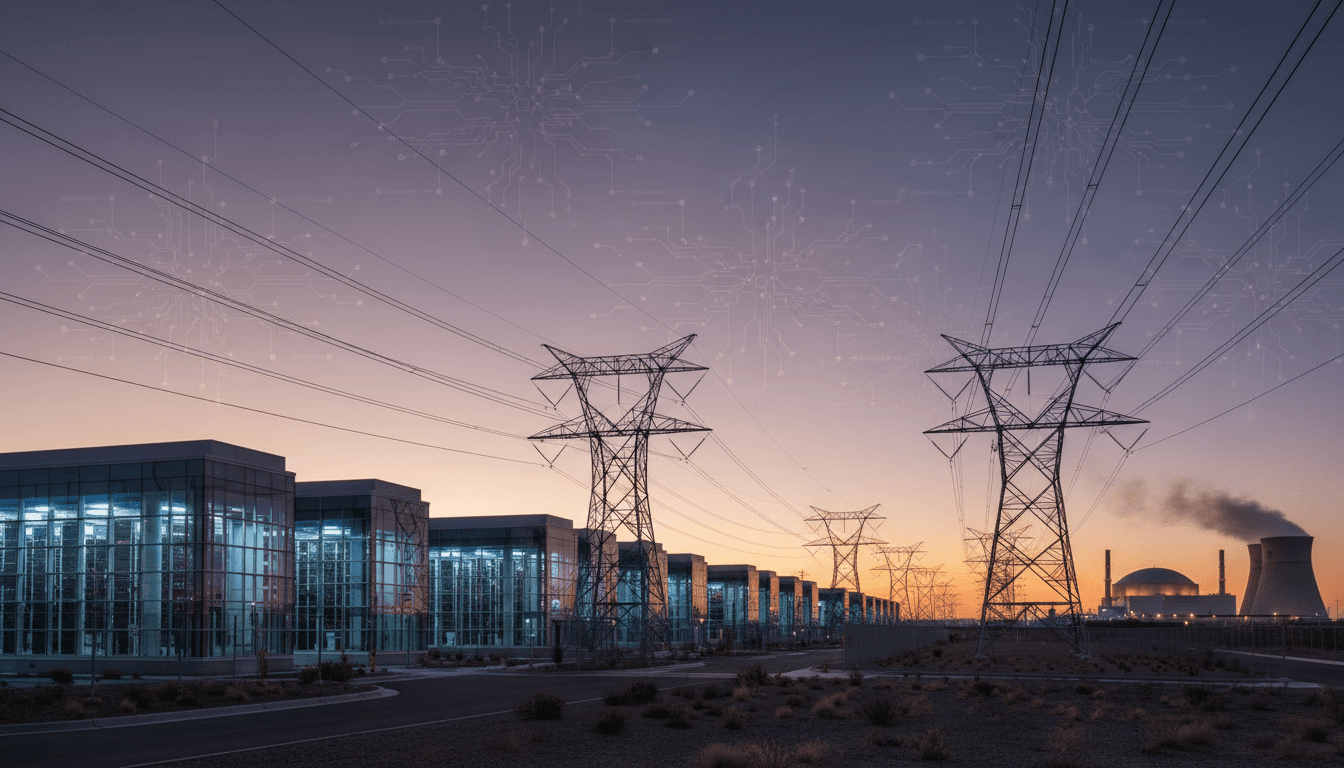

AI Data Centers Need Power: Nuclear vs. AI Hype

Most AI infrastructure talk skips the boring part: electricity. But in the US, data center power constraints are already shaping AI roadmaps, purchase decisions, and even where new facilities get built.

That’s why the recent chatter about next‑generation nuclear power for AI companies isn’t just a futuristic sidebar—it’s a realistic response to a very practical problem. At the same time, social media keeps rewarding the loudest AI claims (even when they’re shaky), creating a weird split-screen: serious infrastructure planning on one side, and hype-fueled “breakthroughs” on the other.

If you work in cloud computing, digital services, or anything that depends on reliable compute, this matters because it changes what “scaling AI” actually means in 2026: not just GPUs and models, but power procurement, grid strategy, and credibility.

Why AI infrastructure is running into an energy wall

AI growth is colliding with the reality that power is a first-class constraint for US data centers. You can order more servers; you can’t instantly add transmission lines, substations, and generation.

A useful mental model is this: for many organizations, the hard limit on AI isn’t the model architecture—it’s the megawatts available at the right place, with the right uptime, at the right price. Cloud providers and large enterprises are increasingly dealing with:

- Interconnection queues (waiting to connect large loads to the grid)

- Local transmission bottlenecks (power exists, but can’t be delivered reliably)

- Demand charges and peak pricing that punish spiky workloads

- Regulatory and permitting timelines that don’t match software timelines

And AI workloads are spiky. Training runs, batch inference, and periodic model refreshes can create massive peaks. That’s a nightmare if your energy strategy assumes smooth, predictable load.
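
To see why spiky load hurts, here is a minimal sketch of a bill that includes a demand charge. The tariff numbers and both load profiles are invented for illustration, and `period_bill` is a hypothetical helper; real tariffs vary by utility and contract.

```python
def period_bill(load_kw, hours_per_sample, energy_rate, demand_rate):
    """Return (energy_cost, demand_cost) for a list of average-kW samples."""
    energy_kwh = sum(load_kw) * hours_per_sample
    peak_kw = max(load_kw)
    return energy_kwh * energy_rate, peak_kw * demand_rate


# Assumed tariff: $0.08 per kWh plus $15 per kW of peak demand in the period.
ENERGY_RATE = 0.08
DEMAND_RATE = 15.0

# Two illustrative hourly profiles with the same total energy (24,000 kWh).
smooth = [1000.0] * 24                 # steady 1 MW
spiky = [500.0] * 20 + [3500.0] * 4    # 0.5 MW baseline plus a 4-hour 3.5 MW burst

smooth_energy, smooth_demand = period_bill(smooth, 1.0, ENERGY_RATE, DEMAND_RATE)
spiky_energy, spiky_demand = period_bill(spiky, 1.0, ENERGY_RATE, DEMAND_RATE)

print(f"smooth: energy ${smooth_energy:,.0f}, demand ${smooth_demand:,.0f}")
print(f"spiky:  energy ${spiky_energy:,.0f}, demand ${spiky_demand:,.0f}")
```

Both profiles consume the same energy, so the energy line of the bill matches. Under these assumed rates, the demand line is 3.5 times larger for the spiky profile, because it is driven by the peak alone.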

The data center shift: from “location scouting” to “grid scouting”

Site selection is becoming grid-first. For years, developers chased tax incentives and fiber; now they prioritize proximity to generation, substation capacity, and speed-to-power.

If you’re a digital service provider selling AI features, this translates into an uncomfortable truth: your product roadmap may depend on your energy roadmap, even if you don’t own a single data center.

Why next-gen nuclear keeps coming up (and why it’s not a fantasy)

The most credible reason AI companies are betting on next-gen nuclear is simple: firm, carbon-free power at scale. Wind and solar are valuable, but without storage and transmission, they don’t reliably cover 24/7 high-load compute.

Nuclear—particularly small modular reactors (SMRs) and other advanced designs—gets pitched as a way to supply:

- High-capacity, steady baseload power

- Long asset lifetimes (a better match for long-lived data center campuses)

- Lower operational emissions, supporting corporate climate commitments

Do I think nuclear is the only answer? No. But I do think it’s one of the few options that aligns with what hyperscale AI actually needs: predictable power with predictable uptime.

The real obstacle isn’t physics—it’s timelines and risk

Nuclear is slow to deploy compared with cloud build cycles. Even “small” reactors face permitting, financing, supply-chain constraints, and public trust issues. The mismatch looks like this:

- Data center capacity planning: often 18–36 months

- Major grid upgrades: frequently 3–7 years

- New nuclear projects: can take many years, even with supportive policy

So why are AI companies still talking about it? Because the alternative is also slow. If transmission upgrades and firm generation are the bottleneck either way, locking in long-term firm capacity starts looking rational.

A practical hybrid approach: nuclear + grid + efficiency

The smartest infrastructure strategy I’m seeing (and advising toward) isn’t “bet everything on nuclear.” It’s:

- Aggressive efficiency (model, hardware, and workload optimization)

- Grid power with better contracts (long-term PPAs, capacity commitments)

- On-site or near-site firm power where feasible (gas with carbon strategy, nuclear in the longer term)

- Storage and demand response to smooth peaks

This layered approach matters for US digital services because customers are increasingly asking not just “Is it fast?” but “Is it reliable, and what does it cost when usage doubles?” Power is part of that answer.

The hype problem: social media rewards confidence, not correctness

AI hype spreads because the incentives are misaligned. Social platforms reward novelty, absolutist claims, and status signaling. And in AI, a confident post can far outrun a careful paper in engagement.

One recent episode was telling: a public back-and-forth between prominent AI leaders after an overexcited claim about a large language model helping solve major unsolved math problems. The pushback (“This is embarrassing.”) wasn’t just internet drama. It was a reminder that:

The fastest way to lose trust in AI is to oversell what it can do.

For US tech businesses, hype has a direct operational cost:

- Procurement gets distorted (“We need GPT-5-level magic next quarter”)

- Roadmaps become performative (shipping demos instead of durable systems)

- Risk teams get ignored until a public incident forces attention

- Energy and compute planning break because usage forecasts are fantasy

The credibility gap shows up in infrastructure budgets

Here’s what I’ve found: infrastructure leaders don’t mind ambition. They mind unverifiable claims that drive multi-million-dollar decisions.

If your marketing says “our AI will replace X,” but your production system requires humans to constantly correct outputs, you haven’t built AI—you’ve built a labor reallocation engine with extra steps.

And when it comes to energy planning, exaggeration is dangerous. Overestimating adoption can leave you paying for unused capacity; underestimating it can leave you throttling customers.

What “real AI progress” looks like in cloud computing & data centers

Real progress in AI infrastructure is measurable: lower cost per inference, higher utilization, and better reliability under load. It’s not a viral claim; it’s a dashboard.

Below are concrete, practical levers that matter for teams building AI-powered digital services in the US.

Model efficiency is now an energy strategy

You can treat model optimization as an MLOps concern—or you can treat it as a power and profitability concern. The second framing is the one that wins budgets.

Practical moves:

- Right-size models: many workloads don’t need the largest model class

- Use distillation and quantization to cut inference cost

- Adopt retrieval-augmented generation (RAG) where accuracy matters more than “model vibes”

- Cache aggressively (especially for high-frequency, low-variance prompts)

Snippet-worthy truth: The greenest megawatt is the one your scheduler never requested.
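
As a rough illustration of the caching lever, here is a minimal in-memory sketch. `PromptCache` and `fake_model` are hypothetical names, not part of any library, and the normalization (lowercasing, collapsing whitespace) is an assumption that only suits prompts where case and spacing don't change the answer.

```python
import hashlib
import time


class PromptCache:
    """Tiny in-memory cache keyed on a normalized prompt, with a TTL."""

    def __init__(self, ttl_seconds=300.0, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (expires_at, response)
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt):
        # Collapse case and whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_compute(self, prompt, compute):
        key = self._key(prompt)
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: pay for one model call and store the result.
        self.misses += 1
        response = compute(prompt)
        self._store[key] = (now + self.ttl_seconds, response)
        return response


def fake_model(prompt):
    # Hypothetical stand-in for an expensive inference call.
    return f"answer to: {prompt.strip().lower()}"


cache = PromptCache(ttl_seconds=60.0)
first = cache.get_or_compute("What are your opening hours?", fake_model)
second = cache.get_or_compute("  what are your   opening hours? ", fake_model)
print(cache.hits, cache.misses)
```

The second call never reaches the model. In production you would likely want a shared store and a size bound, but the hit and miss counters are the part worth keeping: they tell you how much compute the cache is actually saving.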

Workload management: schedule like you pay for peaks (because you do)

Cloud and colocation bills increasingly reflect peak demand, and so does grid pricing. So AI teams need to cooperate with infrastructure teams on scheduling.

Actions that usually pay off quickly:

- Batch non-urgent inference to off-peak windows

- Precompute for predictable workloads (recommendations, summaries)

- Set SLO tiers so not every request demands lowest latency

- Use queue-based architectures to smooth bursts

This is where the “AI in Cloud Computing & Data Centers” theme becomes real: intelligent resource allocation is both a cost-control method and an energy method.
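
Here is one way SLO tiers and a queue could work together, as a minimal sketch. `TieredQueue`, the tier numbers, and the per-tick capacity are all assumptions for illustration; a real system would sit on a message broker and enforce per-tier latency budgets.

```python
import heapq
import itertools


class TieredQueue:
    """Drain requests at a fixed rate per tick, serving lower tier numbers first."""

    def __init__(self, capacity_per_tick):
        self.capacity_per_tick = capacity_per_tick
        self._heap = []
        self._order = itertools.count()  # tie-breaker keeps FIFO order within a tier

    def submit(self, request_id, tier):
        heapq.heappush(self._heap, (tier, next(self._order), request_id))

    def tick(self):
        """Return the requests served during one scheduling interval."""
        served = []
        while self._heap and len(served) < self.capacity_per_tick:
            _, _, request_id = heapq.heappop(self._heap)
            served.append(request_id)
        return served

    def __len__(self):
        return len(self._heap)


q = TieredQueue(capacity_per_tick=3)

# A burst of 7 requests arrives at once: tier 0 = interactive, tier 1 = batch.
for i in range(4):
    q.submit(f"batch-{i}", tier=1)
for i in range(3):
    q.submit(f"chat-{i}", tier=0)

ticks = []
while len(q) > 0:
    ticks.append(q.tick())

for n, served in enumerate(ticks):
    print(n, served)
```

The burst of seven is spread over three intervals, and the backend never handles more than three requests at a time. Interactive requests go first even though they arrived last. Strict priority like this can starve the batch tier under sustained interactive load, so a real scheduler would usually add aging or a reserved share for lower tiers.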

Trust and safety: the unsexy competitive advantage

That episode also points to a broader cultural issue: AI claims tend to be unmoored from verification. In regulated or high-stakes industries, that’s not a branding issue; it’s a dealbreaker.

If you’re building AI features for US customers, implement these basics and say them out loud:

- Evaluation harnesses (accuracy, hallucination rates, refusal behavior)

- Monitoring and incident response for model drift

- Human-in-the-loop workflows where errors are costly

- Clear disclosure when users are interacting with AI-generated outputs

People want AI that works. They don’t want AI theater.
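
An evaluation harness doesn't have to start big. Below is a minimal sketch that scores a model on labeled cases and reports accuracy with a Wilson score interval, so a small eval set can't masquerade as a precise number. `toy_model`, the four cases, and exact-match scoring are placeholders; real harnesses usually need task-specific graders.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if trials == 0:
        raise ValueError("need at least one trial")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def evaluate(model, cases):
    """Run model on (prompt, expected) pairs; return accuracy and its interval."""
    correct = sum(1 for prompt, expected in cases if model(prompt) == expected)
    low, high = wilson_interval(correct, len(cases))
    return {
        "n": len(cases),
        "accuracy": correct / len(cases),
        "ci_low": low,
        "ci_high": high,
    }


def toy_model(prompt):
    # Hypothetical stand-in for a real model call.
    return prompt.upper()


# Illustrative eval set; the last case is one the toy model gets wrong.
cases = [("a", "A"), ("b", "B"), ("c", "C"), ("d", "x")]
report = evaluate(toy_model, cases)
print(report)
```

With only four cases the interval is very wide, which is the point: a headline accuracy figure means little until the eval set is large enough to narrow it.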

People also ask: what does nuclear have to do with AI data centers?

Nuclear comes up because AI data centers require firm, high-uptime power that renewables alone may not provide without massive storage and grid upgrades. Advanced nuclear is one proposed way to supply steady low-carbon electricity near large compute loads.

Is nuclear the fastest fix? No. Efficiency, better scheduling, and grid contracts move faster. But for long-term capacity planning—especially for large US AI campuses—nuclear is part of the serious conversation.

What should a mid-sized digital services company do today? Focus on efficiency and workload control first, then choose cloud regions and providers with credible power expansion plans and transparent reliability metrics.

What to do next (if you build AI-powered digital services)

If you want reliable AI at scale, treat power and credibility as product requirements. That means making infrastructure and communications decisions that hold up after the demo.

A practical checklist I’d use in Q1–Q2 2026 planning:

- Ask your cloud/provider partners how they’re addressing regional power constraints (not just GPU availability)

- Measure cost per successful task, not cost per token

- Build an internal “hype filter”: no production claim without eval results and error bars

- Design for burst control: queues, caching, tiered latency SLOs

- Track energy-related risk in the same register as security and compliance
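
To make the "cost per successful task" item concrete, here is a small sketch. The per-attempt costs and success counts are invented for illustration, and `cost_per_successful_task` is a hypothetical helper rather than a standard metric implementation.

```python
def cost_per_successful_task(attempts):
    """attempts: iterable of (cost_usd, succeeded) pairs, retries included."""
    total_cost = 0.0
    successes = 0
    for cost_usd, succeeded in attempts:
        total_cost += cost_usd
        if succeeded:
            successes += 1
    if successes == 0:
        return float("inf")  # money spent, nothing delivered
    return total_cost / successes


# Assumed numbers: a cheap model that succeeds 4 times in 10,
# and a model twice as expensive per attempt that succeeds 9 times in 10.
small_model = [(0.01, i < 4) for i in range(10)]
large_model = [(0.02, i < 9) for i in range(10)]

small_cost = cost_per_successful_task(small_model)
large_cost = cost_per_successful_task(large_model)
print(f"small: ${small_cost:.4f} per success, large: ${large_cost:.4f} per success")
```

Under these made-up numbers the model that costs twice as much per attempt is cheaper per successful task, which a per-token comparison would hide. With different success rates the result can flip, so the inputs should come from your own eval and production data.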

The bigger question heading into 2026 isn’t whether AI will keep growing in the US digital economy—it will. The question is whether your organization will scale on infrastructure reality or on social-media narratives.

If your next AI feature suddenly doubled in usage, would your platform respond with graceful scaling—or with throttles, surprises, and a very expensive month-end bill?